Editor's Introduction: In 2013, lossy compression is everywhere—without lossy codecs like MP3, Dolby Digital, DTS, A2DP, AAC, apt-X, and Ogg Vorbis, there would be no Web audio services like Spotify or Pandora, no multichannel soundtracks on DVD, no Bluetooth audio, no DAB and HDradio, no Sirius/XM, and no iTunes, to quote the commercial successes and no Napster, MiniDisc, or DCC, to quote the failures. Despite their potential for damage to the music, the convenience and sometimes drastic reduction in audio file size have made lossy codecs ubiquitous in the 21st century. Stereophile covered the development of lossy compression; following is an article from more than two decades ago warning of the sonic dangers.—Editor

"Low bit-rate" coding, Peter W. Mitchell

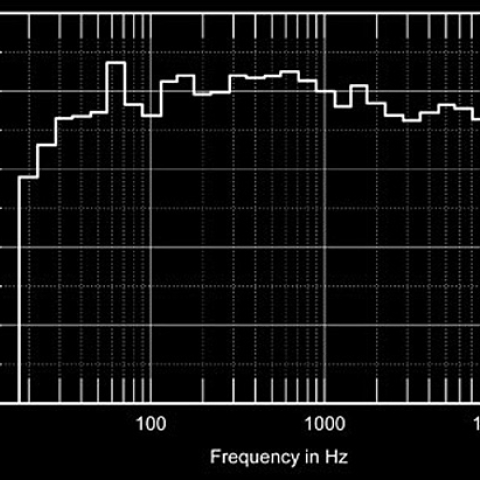

"Low bit-rate" coding is the accepted new jargon for digital bit-rate compression schemes that encode audio with many fewer bits than the 1.4 million bit/second used in the CD (footnote 1). Suddenly these schemes are being discussed and evaluated everywhere. Consumer digital recording systems will use moderate bit rates (384 kilobits/second for the Philips DCC and 300kb/s for the Sony Mini-Disc), reductions of 4:1 and 5:1 from the CD bit rate. Proposed digital audio broadcasting systems will use a bit rate of 192 or 256kb/s for stereo. Other applications may use still lower bit rates, implying more drastic compression schemes and more audible compromises. You may already be listening to some of these. For example, FM stations, which traditionally have used equalized telephone lines to carry the audio signal from the studio to a transmitter several miles away, are changing over to digitally encoded studio-transmitter links. Listening tests to evaluate low bit-rate coding systems have been underway in Sweden for about a year. In the US the Digital Audio Subcommittee of the Electronics Industries Association has launched its own program to evaluate digital bit-rate compression schemes and digital broadcasting systems. Participants in a seminar on low-bit coding at the October AES convention agreed on the importance of caution, to avoid the error of adopting a system that might later be found to have audible flaws. Bart Locanthi, former chairman of the AES digital standards committee, told of receiving a DAT copy of sample low bit-rate recordings that were used in the Swedish listening tests. He discovered false tones in the sound that had escaped detection by all of the listening panels!

Andrew Miller of the Canadian Broadcasting Corporation discussed the CBC's tests of seven low bit-rate systems and played sample recordings made through each. The variations in sound quality were shockingly large. The systems that used a moderate amount of bit-rate reduction reproduced vocal timbres reasonably well. But systems with very low bit rates caused drastic changes in the timbre of something as simple as a male speaking voice, dulling its clarity and adding severe colorations. A recording of a glockenspiel provided an even greater challenge. The systems with the lowest bit rates (below 100kb/s) produced grossly dull and distorted sound. Even the best of the tested systems altered the character of the sound slightly at high frequencies.

Of the seven designs, the one that produced the least sonic impairment was the Dolby AC-2, originally developed for satellite relays of digital audio. An earlier Dolby AC-1 system, which uses adaptive delta-modulation and operates at about half the bit-rate of the CD, has been in widespread use for several years, notably for national distribution of TV sound or networked FM broadcasts. (Historical note: The ADM circuit in the AC-1 system is a refined adaptation of the encoder used in a consumer time-delay ambience simulation system marketed by A.D.S. a decade ago.) The AC-2 system is said to provide similar sound quality with much lower bit rates, 192 or 256kb/s, the same as the European MUSICAM system.
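
To put the bit rates quoted so far on a common footing, here is a minimal arithmetic sketch. It assumes the standard CD parameters (44.1kHz sampling, 16-bit words, two channels), which are not spelled out in the text, and it treats "kb/s" as 1000 bits/second. The names `cd_bit_rate` and `compression_ratio` are illustrative only.

```python
# Illustrative arithmetic only. The coded rates are the round figures quoted
# in the article; the CD parameters are the standard ones (an assumption here,
# since the article gives only the rounded "1.4 million" total).
CD_SAMPLE_RATE_HZ = 44_100
CD_BITS_PER_SAMPLE = 16
CD_CHANNELS = 2

QUOTED_RATES_BPS = {
    "Philips DCC": 384_000,
    "Sony Mini-Disc": 300_000,
    "Broadcast / AC-2 (higher rate)": 256_000,
    "Broadcast / AC-2 (lower rate)": 192_000,
}


def cd_bit_rate() -> int:
    """Raw stereo CD audio bit rate in bits per second."""
    return CD_SAMPLE_RATE_HZ * CD_BITS_PER_SAMPLE * CD_CHANNELS


def compression_ratio(coded_bits_per_second: float) -> float:
    """How many times smaller the coded stream is than the CD stream."""
    return cd_bit_rate() / coded_bits_per_second


if __name__ == "__main__":
    print(f"CD: {cd_bit_rate()} bits/second")
    for name, rate in QUOTED_RATES_BPS.items():
        print(f"{name}: {rate} b/s, about {compression_ratio(rate):.1f}:1")
```

On these assumptions the DCC and Mini-Disc figures come out near 3.7:1 and 4.7:1, which is where the rounded 4:1 and 5:1 come from, while the 192kb/s rate is a reduction of more than 7:1.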

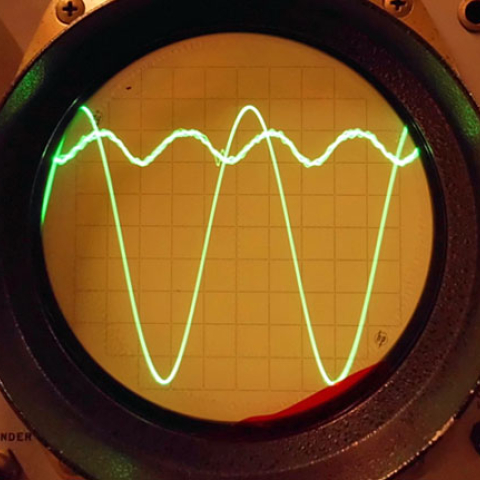

A description of the AC-2 system outlined two reasons for its lack of obvious sonic flaws. One is that its digital filters were matched to the "masking" curve of the human ear with exceptional care, erring on the conservative side to ensure that the noise and distortion products that result from low bit-rate coding will always be inaudible. (Of course, skilled use of masking is nothing new for Dolby; every Dolby noise-reduction system from A to SR has relied on it.) The second factor is Dolby's handling of the transitions between digital data blocks—the points where the amount of data compression is altered according to the level, frequency content, and masking behavior of the incoming signal. Many digital compression systems exhibit a momentary dropout or burst of distortion at those transition points, a problem that Dolby solved with a rapid crossfade between successive data blocks.
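
The description above does not say how Dolby's crossfade is actually implemented, so the following is only a generic sketch of the idea: two successive decoded blocks overlap by a few samples, and the output fades linearly from one to the other across the overlap instead of switching abruptly. The function name, the linear ramp, and the overlap length are all assumptions for illustration, not details of AC-2.

```python
from typing import List


def crossfade_join(prev_block: List[float],
                   next_block: List[float],
                   overlap: int) -> List[float]:
    """Join two blocks, blending the last `overlap` samples of `prev_block`
    with the first `overlap` samples of `next_block` using a linear ramp.

    Hypothetical helper: a plain linear crossfade, not Dolby's method.
    """
    if not 0 < overlap <= min(len(prev_block), len(next_block)):
        raise ValueError("overlap must be positive and fit inside both blocks")

    head = prev_block[:-overlap]   # samples taken only from the earlier block
    tail = next_block[overlap:]    # samples taken only from the later block

    blended = []
    for i in range(overlap):
        # Weight of the later block rises steadily across the overlap;
        # the two weights always sum to 1.
        w = (i + 1) / (overlap + 1)
        blended.append((1.0 - w) * prev_block[-overlap + i] + w * next_block[i])

    return head + blended + tail


if __name__ == "__main__":
    # A block at full level handing over to a silent block:
    print(crossfade_join([1.0] * 4, [0.0] * 4, overlap=3))
```

Because the two weights sum to one at every sample, a signal that is identical in both blocks passes through the join unchanged; only the difference between the blocks is smoothed.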

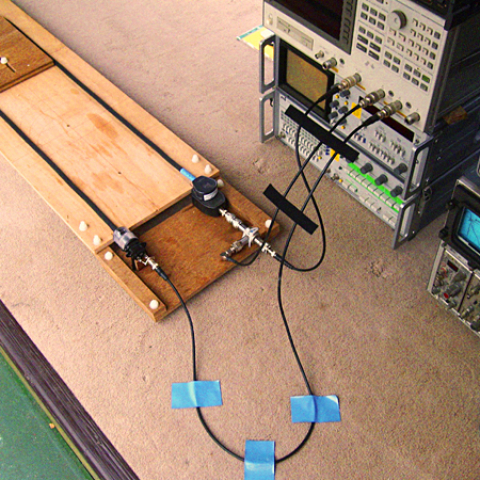

AT&T's T1 long-distance digital transmission standard uses either fiber-optic cable or a special type of coaxial copper cable to provide a bandwidth of 1.5 Megabits/second. With digital data compression, several channels of high-quality audio, or a combination of audio and computer data, can be carried on a single T1 line. Lucasfilm uses a T1 line to link accounting computers, a network of computer workstations, and several voice-grade telephone channels from Skywalker Ranch (near San Francisco) to studios in Los Angeles. They have also used Dolby AC-2 encoders to send two channels of wide-range audio over the 400-mile path. When a Dolby SR film soundtrack was encoded, transmitted to LA, and bounced back to Skywalker Ranch for comparison with the source signal, the returned signal was found to be nearly indistinguishable from the source.
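
As a rough illustration of how many coded programs fit on one such line, the sketch below divides the round 1.5 Megabit/second figure by the 192 and 256kb/s rates mentioned earlier. It assumes those rates apply to a stereo pair and ignores framing overhead; `programs_per_line` and its `reserved_bits_per_second` parameter (capacity set aside for other traffic, such as telephone channels) are hypothetical names introduced here.

```python
T1_BITS_PER_SECOND = 1_500_000  # round figure quoted in the article


def programs_per_line(line_bits_per_second: int,
                      program_bits_per_second: int,
                      reserved_bits_per_second: int = 0) -> int:
    """Whole number of coded programs that fit on a line after setting
    aside `reserved_bits_per_second` for other traffic."""
    available = line_bits_per_second - reserved_bits_per_second
    if available < 0:
        raise ValueError("reserved capacity exceeds the line rate")
    return available // program_bits_per_second


if __name__ == "__main__":
    for rate in (256_000, 192_000):
        count = programs_per_line(T1_BITS_PER_SECOND, rate)
        print(f"{rate} b/s stereo programs per line: {count}")
```

With the round figure this gives five stereo programs at 256kb/s or seven at 192kb/s. The result is sensitive to the exact line rate: a payload of 1.536 Megabits/second (24 channels of 64kb/s, the figure commonly given for T1) would carry exactly six or eight.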

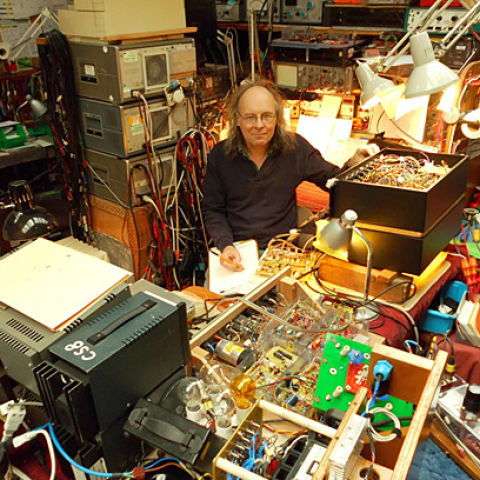

Lucasfilm plans to lease additional T1 lines and send all 24 channels of a soundtrack to LA for remote mixing. Other plans will give the term "remote recording" a new meaning: instead of transporting a truckload of equipment to record a concert in a church, just hang microphones, preamps, and AC-2 encoders to transmit line-level signals back to the studio and record them there while monitoring the sound in a familiar acoustic environment.—Peter W. Mitchell

Lossy Images of Audio, Robert Harley

In addition to the Audio Engineering Society's twice-yearly conventions, the organization holds special conferences on particularly timely audio topics. "Images of Audio," the 10th International conference, was held in London this past September. Although the conference's theme was audio for visual images (HDTV in particular), most of the presentations and discussions were about what's happening at the cutting edge of digital audio in general. The 17 technical presentations covered everything from digital audio in video recorders to sample-rate conversion. There was also a series of papers on bit-rate reduction and a panel discussion on these schemes, described in detail below.

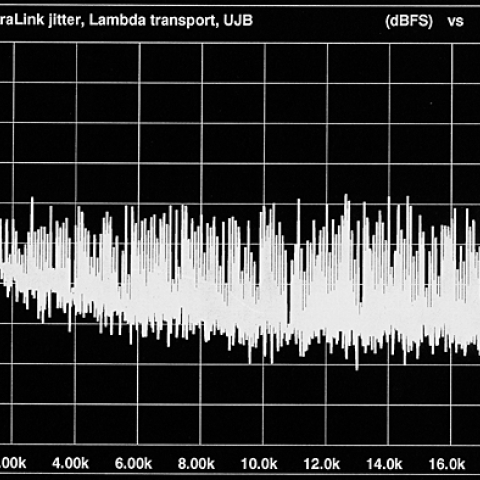

The conference was unique in that it included a one-day tutorial on digital audio. Malcolm Hawksford's superb introduction included jitter, oversampling, noise-shaping, filters, and aliasing. In addition to being one of our foremost experts on digital audio, he is an excellent teacher and presenter of technical information.

During his discussion of jitter, Dr. Hawksford mentioned that "we read in magazines about attempts to reduce jitter—like the green pen on a CD." This brought howls of laughter from the audience. Dr. Hawksford quickly admonished the audience with a pointed finger and this response: "Don't be so quick...There may be more to this than you think."

Taking a decidedly contrary attitude was John Watkinson, author of six books on digital audio and video including the excellent The Art of Digital Audio (Focal Press). The thrust of his talk on digital audio data storage was that digital audio storage devices should be thought of exactly as computer data storage devices. He said that digital audio data is identical to data stored on a floppy disc, his American Express card's magnetic stripe, and other forms of data storage. I found Mr. Watkinson's insights into the fundamental principles of digital audio fascinating, but he took the opportunity to attack audiophiles, saying essentially that if the ones and zeros are the same, the sound must be the same: "Somehow I can't conceive of an audiophile [binary] 'one'."

Footnote 1: For more complete discussions of data compression, see "As We See It" in April 1991, "As We See It" in May 1991, "As We See It" in July 1994, and Tom Norton's discussion of the DTS codec in March 1995.—John Atkinson