Digital Data Compression: Music's Procrustean Bed

Procrustean bed: a scheme or pattern into which something or someone is arbitrarily forced.

Procrustes: a villainous son of Poseidon in Greek myth who forces travelers to fit into his bed by stretching their bodies or cutting off their legs.—Webster's Ninth New Collegiate Dictionary

In most fields of scientific endeavor, advancing the state of the art is the primary goal of researchers and academics. From computer science to medicine to astronomy, technological frontiers are continually being pushed forward—and with astounding results. We can now "walk" through a building that exists only in the architect's computer, splice together the building blocks of life in a laboratory, and take close-up photographs of the outer planets. These achievements will undoubtedly be eclipsed by even more remarkable developments as mankind continually strives to extend the limits of his emerging technological power. If "necessity is the mother of invention," then "dissatisfaction is the father of progress."

There is one field of scientific inquiry, however, where the goal is not to advance absolute performance but to find ways of making existing, limited technology commercially exploitable, even at the expense of quality. Unfortunately, this field is a hot new area of research in digital audio encoding. Called "bit-rate reduction" or "data compression," this is a scheme whereby the data rate for a digital audio signal is reduced by over 80%, accomplished partly by employing a more efficient encoding scheme, but primarily by throwing out a large amount of musical information judged to be inaudible (footnote 1).

At the Audio Engineering Society convention in Paris this past February, I had a glimpse of the role data compression may play in shaping audio's future, and the prospects are frightening. There is a juggernaut moving with tremendous momentum toward implementing data-compression schemes in virtually all aspects of music storage and transmission. Bit-rate reduction systems are the foundation on which many future audio technologies are based, from Philips's Digital Compact Cassette (DCC) to Digital Audio Broadcasting (DAB), to cinematic soundtracks, and even a CD with extended playing time.

Even more disturbing is the prospect that data compression may be used in professional applications to make master recordings. It's conceivable that the majority of recorded music will be subject to some form of data compression in as little as ten years. Consequently, data compression is not merely a mass-market mid-fi system avoidable by the serious listener. Like it or not, we will all be subject to bit-rate–reduced digital audio.

Before discussing the implications of data compression, let's look at why such a contrivance is necessary for greater commercial exploitation of digital audio.

Conventional 16-bit linear PCM digital audio with a 44.1kHz sampling rate (as found on a Compact Disc) requires 705,600 bits, or 705.6 kilobits, per second per monaural channel (705.6kb/s/ch). This number is obtained by multiplying the sampling rate (44,100) by the quantization word length (16). The stereo signal on a CD thus consumes 1.41 million bits per second, or about 10.6 megabytes per minute (1 byte = 8 bits). And this is just the raw audio data, which comprises only about a third of the CD's storage capacity (the rest is encoding, error correction redundancy, subcode, etc.). For comparison, this essay you are now reading consumes 23,000 bytes of storage, about the same amount of data as 1/8 of a second of CD-quality stereo digital audio. Clearly, 16-bit PCM audio involves a huge amount of data, creating a storage and transmission bottleneck, at least from a commercial point of view.
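The data-rate figures in the paragraph above follow from simple multiplication. A minimal Python check, using only the CD parameters given in the text (the 23,000-byte essay size is the article's own figure):

```python
# Arithmetic behind the CD data-rate figures.
SAMPLE_RATE_HZ = 44_100   # CD sampling rate
WORD_LENGTH_BITS = 16     # CD quantization word length
CHANNELS = 2              # stereo

def pcm_bit_rate(sample_rate_hz: int, word_length_bits: int, channels: int = 1) -> int:
    """Bits per second of linear PCM: sampling rate x word length x channels."""
    return sample_rate_hz * word_length_bits * channels

mono_bps = pcm_bit_rate(SAMPLE_RATE_HZ, WORD_LENGTH_BITS)              # 705,600 b/s
stereo_bps = pcm_bit_rate(SAMPLE_RATE_HZ, WORD_LENGTH_BITS, CHANNELS)  # 1,411,200 b/s
megabytes_per_minute = stereo_bps * 60 / 8 / 1_000_000                 # about 10.6 MB

# How much stereo audio occupies as much storage as a 23,000-byte text file?
# Convert bytes to bits before dividing by the bit rate.
essay_bits = 23_000 * 8
essay_seconds = essay_bits / stereo_bps                                # about 0.13 s

print(f"mono:   {mono_bps:,} b/s")
print(f"stereo: {stereo_bps:,} b/s, {megabytes_per_minute:.1f} MB/min")
print(f"23,000 bytes = {essay_seconds:.3f} s of stereo CD audio")
```

Forgetting the factor of 8 between bits and bytes is the easiest way to go wrong here: 1/60 of a second of stereo audio is about 23,500 bits, not bytes.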

To store or transmit such a large amount of data requires mass storage capacity or a wide transmission bandwidth channel. Mass storage and wide bandwidth mean high cost. High cost means precluding mass-market applications. Precluding mass-market applications means little profit for the companies selling new hardware. Consequently, a whole industry with enormous profit potential is developing around bit-rate–reduced digital audio systems—an industry that would not be possible without this drastic reduction in the digital audio data rate.

In addition to Philips's Digital Compact Cassette (DCC), which uses PASC, a type of data compression (see "Industry Update" in April, footnote 2), a massive project is underway in Europe to replace FM radio transmission with Digital Audio Broadcasting (DAB). In DAB, many radio stations' signals are multiplexed together and broadcast from a satellite to consumers' digital "tuners." By reducing the data rate of a digital audio signal, more stations can be squeezed into a narrower bandwidth, reducing cost. There is a direct and inviolable correlation between transmission cost and bit rate.

With digital audio broadcasting made possible by reducing the bit rate, a whole new demand for consumer products is suddenly created. It doesn't take a marketing genius to realize that DAB will make an entire generation of hardware (all radio receivers, including car stereos) obsolete, forcing consumers to replace their hundreds of millions of existing units.

But how can the musical information represented by 705.6kb/s/ch (which many argue isn't nearly enough) be squashed down to 128kb/s/ch without seriously degrading the music? Although the ratio between the digital audio data rate from a CD and that used in data compression schemes is huge (5.5:1), the picture isn't quite as bleak as those numbers would suggest. More efficient encoding techniques are employed, such as sampling low frequencies at a slower rate and allocating bits based on the signal's spectral content.
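The ratio between the two rates, and what 128kb/s/ch leaves for each sample, can be worked out directly. This sketch assumes the 128kb/s/ch budget is spread evenly over 44,100 samples per second, which is a simplification: real coders distribute their bits unevenly across bands and over time.

```python
SAMPLE_RATE_HZ = 44_100
CD_BPS_PER_CH = SAMPLE_RATE_HZ * 16   # 705,600 b/s per channel
CODED_BPS_PER_CH = 128_000            # compressed rate quoted in the text

ratio = CD_BPS_PER_CH / CODED_BPS_PER_CH                       # about 5.5:1
reduction_pct = 100 * (1 - CODED_BPS_PER_CH / CD_BPS_PER_CH)   # share of the data removed
avg_bits_per_sample = CODED_BPS_PER_CH / SAMPLE_RATE_HZ        # average bits left per sample

print(f"ratio {ratio:.2f}:1, reduction {reduction_pct:.1f}%, "
      f"{avg_bits_per_sample:.2f} bits per sample on average (versus 16)")
```

On average, fewer than 3 bits per sample remain to carry what the CD carries with 16.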

Fundamentally, however, data-compression techniques are based on a psychoacoustic phenomenon called "auditory masking," which is defined as "decreased audibility of one sound due to the presence of another." When exposed to two signals, the ear/brain tends to hear only the louder. A good example of this is how tape hiss or record-surface noise becomes apparent only during quiet passages or spaces between tracks. The tape hiss is always present at the same level, but is masked by the music most of the time. Although auditory masking has been well researched (primarily by experimental psychologists), there are many unanswered questions, especially about how the phenomenon relates to musical perception; virtually all masking research is based on steady-state test signals and noise, not music.

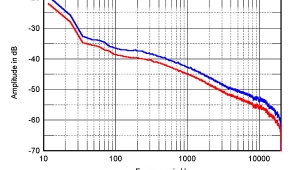

One approach to bit-rate reduction is called "sub-band coding," in which the audio spectrum is split into multiple bands (32 bands in the case of Philips's PASC encoding used in the forthcoming Digital Compact Cassette), and bits are allocated based on the amount of signal in particular bands. Low-level information in a band that also contains high-level signals would be ignored by the encoder because the high-level signal would mask the low-level signal. Bands with little energy are allocated fewer bits, while those with higher energy are assigned more bits. Whatever the technique, all data-compression systems produce very large measurable errors in the signal. These errors are presumed to be masked by the correctly coded wanted signal, just as tape hiss, an error inherent in analog magnetic tape recording, is masked by the music most of the time.
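The allocation idea described above can be sketched as a greedy loop. This is a hypothetical, much-simplified illustration, not the PASC algorithm: the per-band signal levels and masking thresholds are simply taken as given (a real coder derives them from a psychoacoustic model), `allocate_bits` and its `max_bits` cap are invented names, and the figure of roughly 6dB of noise reduction per added bit is the usual approximation for uniform quantization.

```python
from typing import List

DB_PER_BIT = 6.02  # each extra bit lowers quantization noise by about 6 dB

def allocate_bits(signal_db: List[float], mask_db: List[float],
                  bit_pool: int, max_bits: int = 15) -> List[int]:
    """Greedy sub-band bit allocation (simplified, hypothetical).

    Repeatedly gives one more bit to the band whose quantization noise sits
    furthest above its masking threshold. Bands whose signal is already
    below the threshold (fully masked) never receive bits.
    """
    bits = [0] * len(signal_db)
    for _ in range(bit_pool):
        # Noise-to-mask ratio per band: (signal - mask) minus the SNR bought so far.
        nmr = [(s - m) - DB_PER_BIT * b for s, m, b in zip(signal_db, mask_db, bits)]
        # Only bands that can still take a bit and whose noise is still audible.
        candidates = [i for i, b in enumerate(bits) if b < max_bits and nmr[i] > 0]
        if not candidates:
            break  # every band's noise is already under its mask
        worst = max(candidates, key=lambda i: nmr[i])
        bits[worst] += 1
    return bits

bits = allocate_bits([80.0, 60.0, 30.0, 70.0], [50.0, 45.0, 40.0, 40.0], bit_pool=20)
print(bits)
```

With four bands at 80, 60, 30, and 70dB against thresholds of 50, 45, 40, and 40dB, the loop stops after 13 of the 20 available bits and returns [5, 3, 0, 5]. The third band, already below its threshold, gets nothing: that is the "ignored" low-level information described in the text.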

All data-compression systems are based on the current masking theory that has produced the "auditory masking threshold" curve. At the Paris AES Convention, Michael Gerzon presented a paper entitled "Problems of Error Masking in Audio Data Compression" asserting that the current spectral masking theory is flawed (footnote 3). According to the paper, when the error is highly correlated with the signal, the masking threshold can be reduced by as much as 30dB. He backs up his theory with extensive mathematics. If he is correct, all the proposed data-compression systems (which rely on traditional spectral masking thresholds) are fundamentally and fatally flawed.
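One rough way to gauge what Gerzon's 30dB figure would mean in bits: under the same approximation of about 6dB per bit, lowering a band's masking threshold by 30dB calls for about 5 more bits per sample in that band. The worst-case rate below assumes, hypothetically, that every sub-band sample is affected and that the filter bank is critically sampled (44,100 sub-band samples per second per channel in total).

```python
import math

DB_PER_BIT = 6.02               # approximate noise reduction per extra quantizer bit
SUBBAND_SAMPLES_PER_S = 44_100  # critically sampled filter bank: total across all bands

def extra_bits_needed(threshold_drop_db: float) -> int:
    """Whole bits per sample needed to push quantization noise down by threshold_drop_db."""
    return math.ceil(threshold_drop_db / DB_PER_BIT)

extra_bits = extra_bits_needed(30.0)                       # Gerzon's figure
worst_case_extra_bps = extra_bits * SUBBAND_SAMPLES_PER_S  # if every band were affected
print(extra_bits, worst_case_extra_bps)
```

That worst case, 220,500b/s of additional data per channel, is larger than the entire 128kb/s/ch budget.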

In addition to the prospect that data-compression schemes are based on incorrect human hearing models, there are many real-world dangers of bit-rate reduction. It seems to me that the systems have been pushed to the very limits of "acceptability," with "acceptability" determined under ideal laboratory conditions. In the real world, any spectral or dynamic irregularities in the playback system, storage media, or transmission chain will unmask the gross errors present in the signal. The large frequency-response irregularities found in car stereos, for example, could skew the spectral content of the signal, thus revealing the enormous errors hiding beneath the wanted signal. I wouldn't be surprised if there were an official mandate banning graphic equalizers on Digital Audio Broadcasting car stereos!

Similarly, an important question is what the signal-processing devices commonly used in broadcasting do to a signal that has undergone data compression. Most people would be shocked to learn of the great number of compressors, expanders, equalizers, pitch shifters, time compressors, etc. in a broadcasting chain. In an AES workshop on DAB, one audience member recounted finding fifty processing devices in the broadcasting chain between the original signal and the consumer's tuner. How do these devices affect the delicate balance between the huge underlying error and the wanted signal?

Another fear is of the effects of multiple encoding/decoding cycles. What happens to a bit-rate–reduced signal that is decoded, then re-encoded with bit-rate reduction, and so forth over several generations? It can't be good. This is a very likely scenario in the broadcasting chain as signals are transmitted, decoded, stored, and re-encoded for later use. For the consumer playing back a DCC recording of a DAB signal, there are already two encode/decode cycles, even if the signal underwent only one encoding process in the entire broadcasting chain. The information loss must increase with successive generations, perhaps even degrading the signal exponentially.
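Generation loss can be explored with a toy model. The sketch below treats each encode/decode cycle as an independent, dithered uniform requantization; that is an assumption chosen for clarity, not a model of any real coder, and the quantizer step, test tone, and random seed are arbitrary. Under this model the noise powers of successive generations simply add, so the signal-to-noise ratio falls by 10·log10(n) dB after n generations.

```python
import math
import random

def requantize(samples, step, rng):
    """One simulated encode/decode generation: uniform quantization with a fresh
    random grid offset per sample (subtractive dither). The added error is then
    uniform on [-step/2, step/2] and independent of the signal and of earlier
    generations."""
    out = []
    for x in samples:
        offset = rng.uniform(0.0, step)
        out.append(round((x - offset) / step) * step + offset)
    return out

def snr_db(reference, degraded):
    """Signal-to-noise ratio of `degraded` relative to `reference`, in dB."""
    signal_power = sum(r * r for r in reference)
    noise_power = sum((r - d) ** 2 for r, d in zip(reference, degraded))
    return 10 * math.log10(signal_power / noise_power)

rng = random.Random(1991)
original = [math.sin(2 * math.pi * 1000 * n / 44_100) for n in range(20_000)]
step = 2 / 2 ** 6  # 64 levels across -1..1, roughly a coarse 6-bit quantizer

signal = original
snrs = []
for generation in range(8):
    signal = requantize(signal, step, rng)
    snrs.append(snr_db(original, signal))

for n, s in enumerate(snrs, start=1):
    print(f"generation {n}: {s:.1f} dB")
```

Real coders whose errors are correlated with the signal, or whose masking decisions change from one generation to the next, need not follow this tidy pattern; the toy model shows only that the damage accumulates with every cycle.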

And what about concealing transmission errors? All digital audio systems experience loss of data that must be corrected or concealed. Traditional methods of error concealment like linear interpolation (replacing the missing data with an average of surrounding valid samples) are clearly inadequate for compressed-data digital audio, where a single corrupted block of coded data can affect many consecutive output samples at once.
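For ordinary PCM, interpolation concealment is easy to express, because a lost sample is a single point between valid neighbors. A minimal sketch, with missing samples marked as `None` (a convention chosen here for illustration); gaps at either end simply hold the nearest valid value.

```python
from typing import List, Optional

def conceal(samples: List[Optional[float]]) -> List[float]:
    """Replace missing samples (None) by linear interpolation between the
    nearest valid neighbors. For a single missing sample this is the average
    of the two surrounding samples."""
    valid = [i for i, s in enumerate(samples) if s is not None]
    if not valid:
        raise ValueError("no valid samples to conceal from")
    out = [s if s is not None else 0.0 for s in samples]  # placeholders filled below
    first, last = valid[0], valid[-1]
    # Hold the nearest valid value across leading and trailing gaps.
    for i in range(first):
        out[i] = out[first]
    for i in range(last + 1, len(out)):
        out[i] = out[last]
    # Draw a straight line across each interior gap.
    for left, right in zip(valid, valid[1:]):
        for i in range(left + 1, right):
            t = (i - left) / (right - left)
            out[i] = out[left] + t * (out[right] - out[left])
    return out

print(conceal([0.5, None, 0.7, None, None, 1.0]))
```

The straight-line guess is only plausible over a few samples; it has nothing sensible to offer when a whole block of coded data, covering hundreds of samples, goes missing.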

The degradation imposed by multiple generations creates a profound irony: data compression may succeed where Copycode failed. Copycode, you may recall, was the proposed scheme whereby all copyrighted music would have a narrow notch removed from the midband, the lack of energy at that frequency disabling a recording device's record function, thus preventing consumers from making a tape copy. Because data compression introduces potentially severe errors with multiple encode/decode cycles (not to mention the degradation introduced by data compression itself), it may become an effective—if unplanned—method of discouraging home taping.
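Removing a narrow midband notch, as Copycode proposed, amounts to a band-reject filter. This sketch computes a standard second-order (biquad) notch using the widely published "Audio EQ Cookbook" formulas and evaluates its magnitude response. The 3840Hz center frequency and Q of 10 are illustrative assumptions, not Copycode's specification, and the detector in the recorder is not modeled.

```python
import cmath
import math

def notch_coefficients(f0_hz: float, fs_hz: float, q: float):
    """Biquad notch coefficients (b, a), normalized so that a[0] == 1."""
    w0 = 2 * math.pi * f0_hz / fs_hz
    alpha = math.sin(w0) / (2 * q)
    a0 = 1 + alpha
    b = [1 / a0, -2 * math.cos(w0) / a0, 1 / a0]
    a = [1.0, -2 * math.cos(w0) / a0, (1 - alpha) / a0]
    return b, a

def magnitude(b, a, f_hz: float, fs_hz: float) -> float:
    """|H(e^jw)| of the biquad at frequency f_hz."""
    z1 = cmath.exp(-1j * 2 * math.pi * f_hz / fs_hz)  # z^-1 on the unit circle
    num = b[0] + b[1] * z1 + b[2] * z1 * z1
    den = a[0] + a[1] * z1 + a[2] * z1 * z1
    return abs(num / den)

b, a = notch_coefficients(3840.0, 44_100.0, 10.0)  # illustrative values only
for f in (0.0, 1000.0, 3840.0, 10_000.0):
    print(f"{f:>7.0f} Hz: gain {magnitude(b, a, f, 44_100.0):.4f}")
```

The gain is exactly zero at the notch frequency and close to unity elsewhere, which is why the missing energy could serve as a signal to a detector while leaving most of the spectrum untouched.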

Even though these are serious concerns, what really scares me about digital-audio data compression is the potential for professional abuse. It's one thing to compress signals for digital-audio broadcasting or storage on DCC, but quite another if it is applied to master recordings. If that happens, musical information will be irretrievably lost. During every paper, workshop, or discussion regarding data compression I've attended, the word "archival" has surfaced as an application of these techniques. Archiving musical performances with bit-rate–reduced digital audio is not only unconscionable, but strains my ability to comprehend the type of mentality that would even consider such an abomination. It just doesn't make sense. The commercial benefits are virtually nil: recording media aren't that expensive. Preserving our musical heritage for future generations should be done with the best possible methods, not the cheapest or most convenient.

If data reduction is already being proposed for archival uses—where the financial gains are marginal at best—there will be little hesitation to implement it in professional applications where the commercial benefit is far greater. Indeed, Solid State Logic, the British manufacturer of perhaps the most expensive and prestigious recording consoles in the world, has already developed a data-reduction system called Apt-X 100.

More and more music is being recorded in "tapeless studios" on digital audio "workstations" that record individual tracks on large hard disks. Digital audio workstations allow the recording, editing, and signal processing of music in a desktop computer environment. Recall from our earlier discussion that 16-bit, 44.1kHz digital audio consumes 705.6kb/s/ch. With many of today's recordings using 48 tracks or more, we can see the voracious appetite digital audio has for hard disk space. Assuming an hour's worth of music recorded over 48 tracks (not an uncommon situation), plus another hour's worth of 2-track space to which the 48 tracks are mixed, we find ourselves needing 127 billion bits, or nearly 16 gigabytes (16,000 megabytes) of hard-disk storage. Anyone who has priced large hard-disk drives can relate to the huge cost of such a capacity. In addition, this large amount of data requires very fast (read: expensive) drives, since the data is spread over many disks and must be accessed with a minimum of interruption.

Now, consider the same time and channel requirements, but with a data rate of 128kb/s/ch. Rather than needing 16 gigabytes, we now need only 2.9 gigabytes. In addition, compressed audio data means the drives can be much slower (read: cheaper), since the data is spread over an area five times smaller and the effective read/write rate is five times faster. These figures won't be lost on digital audio workstation manufacturers who are caught in the race to offer the greatest number of tracks and the most recording time at the lowest cost. Many professional users tend to value features, flexibility, and return-on-investment potential over sound quality. Moreover, the encoding and decoding chips will be relatively cheap if they are the same ones used in consumer applications.
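The storage figures for the 48-track session can be reproduced as follows. The sustained transfer rate, which bears on the drive-speed point, falls out of the same numbers.

```python
CHANNELS = 48 + 2   # 48 recording tracks plus a 2-track mix
SECONDS = 60 * 60   # one hour

def storage_bytes(channels: int, seconds: int, bps_per_channel: int) -> float:
    """Bytes needed to store `channels` tracks for `seconds` at the given per-channel rate."""
    return channels * seconds * bps_per_channel / 8

def transfer_rate_bytes(channels: int, bps_per_channel: int) -> float:
    """Sustained bytes per second the drives must deliver with all tracks running."""
    return channels * bps_per_channel / 8

pcm_gb = storage_bytes(CHANNELS, SECONDS, 705_600) / 1e9    # about 15.9 GB
coded_gb = storage_bytes(CHANNELS, SECONDS, 128_000) / 1e9  # about 2.9 GB
pcm_mb_s = transfer_rate_bytes(CHANNELS, 705_600) / 1e6     # about 4.4 MB/s
coded_mb_s = transfer_rate_bytes(CHANNELS, 128_000) / 1e6   # 0.8 MB/s

print(f"uncompressed: {pcm_gb:.1f} GB, {pcm_mb_s:.2f} MB/s")
print(f"128kb/s/ch:   {coded_gb:.1f} GB, {coded_mb_s:.2f} MB/s")
```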

Another factor that could fuel the rush to incorporate data reduction into professional applications is the emergence of the MO (Magneto-Optical) disk, a technology destined to supplant traditional hard disks. MO disks are on their way to offering greater storage capacity for less money. But they have one drawback: MO disks are currently too slow for uncompressed digital audio. By compressing the data, however, MO drives become fast enough, and will be much cheaper than magnetic disks on a cost-per-megabyte basis. Unlike magnetic disk drives, MO has removable media: recording new material means replacing the disc rather than erasing the previous information. MO's many advantages may be a motivating factor in implementing data compression in professional equipment.

Looking one more step into the future, data compression figures even more prominently in another technology we're likely to see in the next decade: Random Access Memory (RAM) digital audio storage. In RAM storage, the ones and zeros that represent music are put on a memory chip (recording) and can be read out later (playback)—all with no moving parts. The advantages of RAM storage are many: no wear, no servo mechanisms, very few (if any) data errors, and high resistance to damage. The day may come when music is recorded on, and played back from, silicon.

However, with a 1Mb DRAM chip costing around $4, the cost of RAM storage is prohibitive at today's uncompressed data rates. Bit-rate reduction will look awfully tempting to RAM digital storage system designers: data compression reduces the cost of RAM storage by a factor of 5.5, the ratio between 16-bit 44.1kHz representation (705.6kb/s/ch) and compressed representation (128kb/s/ch). With proposals of 64kb/s/ch rates being advanced today, there may be a race to implement lower and lower data rates to accommodate the limitations of new technologies like RAM storage, and all on the altar of price and corporate profits, not musical performance. Every time a format is made obsolete, the manufacturers and marketers of the replacement technology sustain their existence for several decades because of demand for the new hardware. Just as it has been with the CD replacing the LP, so it will be with the analog cassette and DCC, FM radio and DAB, and eventually CD and RAM storage.
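To put the DRAM figure in perspective, here is a rough cost estimate for one hour of stereo. The one-hour duration, the reading of "1Mb" as 2^20 bits, and the absence of any overhead (addressing, error correction) are assumptions made only for this illustration.

```python
import math

CHIP_BITS = 2 ** 20   # assumed: "1Mb" DRAM = 1,048,576 bits
CHIP_PRICE_USD = 4    # price quoted in the text
SECONDS = 60 * 60     # assumed: one hour of program
CHANNELS = 2          # stereo

def chips_needed(total_bits: int) -> int:
    """Whole chips required to hold total_bits (no overhead assumed)."""
    return math.ceil(total_bits / CHIP_BITS)

pcm_chips = chips_needed(CHANNELS * SECONDS * 705_600)    # 16-bit/44.1kHz PCM
coded_chips = chips_needed(CHANNELS * SECONDS * 128_000)  # 128kb/s/ch coded audio

print(f"PCM:   {pcm_chips:,} chips, ${pcm_chips * CHIP_PRICE_USD:,}")
print(f"coded: {coded_chips:,} chips, ${coded_chips * CHIP_PRICE_USD:,}")
```

Under these assumptions an hour of uncompressed stereo needs 4,845 chips (about $19,000), against 879 chips (about $3,500) at 128kb/s/ch, the same 5.5:1 ratio noted in the text.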

What's so worrisome about this trend is that the goalposts are being moved—in the wrong direction. Instead of striving to better create the illusion of live music, research efforts are dictated by the multinational corporations' need for convenient and cheap methods of storing and transmitting "software." Despite the remarkable and laudable achievements made in this field, bit-rate reduction in its proposed form and application is a step backward, a regression—even a perversion of audio science. It represents a denial of the vital role fidelity plays in communicating the musical experience. "Just good enough" or "barely detectable" appears to be the pinnacle of achievement. Moreover, the whole concept of data compression is a fundamental reversal of where our priorities should be. Audio technology should conform to the requirements of music rather than making music conform to technological limitations.

Ironically, today the Compact Disc, with all its sonic flaws, is used as the reference standard against which bit-rate–reduced audio is judged. Will tomorrow's audio technologies-of-convenience use compressed digital audio quality as the standard toward which to strive?

In principle, bit-rate reduction is a worthwhile endeavor. If more efficient coding schemes can be developed, and there truly is information completely masked by other signals, the data saved should be reallocated to improve, say, low-level resolution, rather than thrown out to serve commercial ends. More important, the idea of using data-compression techniques in professional equipment to make master recordings is an appalling abuse of the whole concept.

It would be a supreme irony if, after several years of listening to compressed digital audio, people start enjoying music less without knowing why. Instead of listening to entire performances, they listen to single tracks, thinking about what they will do when the music's over. Suddenly, music is less interesting, less involving, less moving. Because people enjoy music less, they buy less hardware and software, leading to the demise of the very companies that set in motion this tragic spiral.

The future of recorded music needn't be so bleak. I can imagine a far different scenario: Suppose the companies with huge research facilities and budgets who are now developing data compression instead devote their considerable skills and knowledge to uncovering the vast unexplored mysteries of human musical perception as it relates to recording and playback systems. New measurements would be devised that correlated exactly with perceived qualities. Performance aspects such as soundstage depth, bloom, and liquidity could be quantified and measured. Audio design would no longer have aspects of a black art. With the mysteries solved, even moderately priced systems would outperform today's high-end components.

The result would be mass-produced playback systems that better conveyed the expression and emotion of the composer and performers—the essence of why we listen to music. Without knowing why, the general public would find music listening more satisfying and rewarding. And because music would assume greater importance in their lives, people would spend a larger portion of their disposable income on recorded music and playback hardware, creating a self-perpetuating upward spiral in sales. Everyone would be an audiophile. Consequently, the electronics giants who brought better performance to music playback would reap the rewards of this greatly expanded market and enjoy unprecedented prosperity. It's too bad they will never see it that way.

It's ironic that, in this age of astounding scientific achievements, we feel that music is somehow unworthy of the best technology we can provide. Rather than creating technology that accommodates the requirements of music, we arbitrarily force music to conform to our self-imposed, profit-motivated technological limitations.

Procrustes would be proud.

Footnote 1: The terms "bit-rate reduction" and "data compression" refer to the same thing. Proponents of the concept prefer bit-rate reduction, while skeptics and critics tend to use the term data compression. See last month's "As We See It" and "Industry Update" columns for discussions of data compression.

Footnote 2: Track 14 on Stereophile's Test CD 3 allows listeners to compare PASC-encoded and DTS-encoded versions of the audio data with the original uncompressed version.—Ed.

Footnote 3: Michael Gerzon is the inventor of (among other things) Ambisonics, and is a man with brilliant insights into audio reproduction. He is a former professor of theoretical mathematics at Oxford University and is now an independent audio technical consultant. He applies his formidable mathematical prowess to the study of music reproduction and in support of his audiophile-leaning theories. At the October 1991 AES convention, he intends to present a paper showing mathematical evidence that A/B testing is fundamentally flawed as a tool for revealing audible differences.
