
The Highs & Lows of Double-Blind Testing Page 9

While the significance test technique used by Greenhill and Clark is reasonable and conventional for their data, nevertheless there are mathematically derivable implications and consequences of using this technique to make decisions, consequences with which most audio engineers, including Mr. Clark, are apparently unfamiliar. All I did was to point out some of the more important implications, those regarding Type 2 error and statistical power. These implications can be found in most elementary textbooks on statistics and are not at all controversial. If Mr. Clark does not like the implications of the technique he chose, he shouldn't blame the messenger for the message.

Footnote 5: HFN/RR, January 1986

Closing: Mr. Clark and others are responsible for valuable advances in the science of listening tests. But the statistical methodology employed by Mr. Clark and just about everyone else has not kept pace. In this regard, I would be greatly displeased if anyone used my writings to single out Mr. Clark for criticism of his statistical techniques. My paper was addressed to the field as a whole. I would also be greatly displeased if anyone used my writings to single out double-blind experimenters for criticism from others in the "great debate". I was able to mount a precise and mathematically derivable criticism of their statistical techniques only because they had first taken pains to search out mathematically rigorous and methodologically repeatable methodological techniques. I believe this to be a great improvement over what came before. And so I ask Mr. Clark and other double-blind experimenters to take my writings in the spirit in which they are offered: to make already good work better.—Les Leventhal

John Atkinson Adds His Two Cents' Worth

As someone who has both organized and taken part in many blind tests, I would like to comment on both Mr. Clark's and Mr. Leventhal's letters. I am astonished that David Clark attempts to deny that the null results produced by tests using an inadequate number of trials, organized by him and by others, have been used to state that no differences exist. Indeed, a complete audio subculture has been called into existence, the members of which are adamant that all amplifiers sound the same, that all cables sound the same, that all pickup cartridges sound the same (apart from tonal balance differences), and who incidentally feel that the sound of CD is the greatest thing ever to hit their homes, as they can't be convinced of the audibility of the audible problems.

It would seem that the crux of Mr. Leventhal's AES paper was that where the magnitude of possible differences is small—which is not the same as saying that they are unimportant—the tester has two choices if he is not to waste his time. Either he uses a large number of trials, or, by making use of Mr. Leventhal's work, he acknowledges the inadequate power of the statistics when the number of trials is small.
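The trade-off between number of trials and statistical power can be sketched numerically. The snippet below is a minimal illustration, not a calculation taken from Mr. Leventhal's paper: it assumes a one-sided binomial test at the 0.05 level, and the "true" hit rate of 0.6 (a listener who hears a small difference and is right 60% of the time) is a hypothetical value chosen only for the example. The function names are likewise illustrative.

```python
from math import comb

def binom_tail(n, k, p):
    """P(X >= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p)**(n - i) for i in range(k, n + 1))

def critical_value(n, alpha=0.05):
    """Smallest score whose probability under pure guessing (p = 0.5) is <= alpha."""
    for k in range(n + 2):
        if binom_tail(n, k, 0.5) <= alpha:
            return k

def power(n, p_true, alpha=0.05):
    """Chance of reaching the critical score when the true hit rate is p_true."""
    return binom_tail(n, critical_value(n, alpha), p_true)

# Hypothetical listener with a true hit rate of 0.6 (assumed for illustration).
for n in (16, 50, 100, 200):
    print(f"trials={n:3d}  needed={critical_value(n):3d}  power={power(n, 0.6):.3f}")
```

Under these assumptions, a 16-trial test detects the 60% listener only about one time in six, so a null result is the expected outcome even though a difference is being heard. The Type 2 error rate is simply one minus the power.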

Mr. Clark's position could be summarized in this way: if one listener gets 10 identifications correct out of 16 presentations, then no difference was heard, 12 being needed for the standard 95% confidence level. The results must have been due to chance. Mr. Clark then goes on to say that to use more listeners is impractical ("large numbers of qualified listeners are hard to find") and that he would like to use the results from this test as hard data. As Mr. Leventhal notes, however, standard statistics indicate that if you use a larger number of people, say, 300, who each then get that same score of 10 correct out of 16, then this constitutes incontrovertible evidence that a difference exists. And if the 300 listeners average out at 8 out of 16, then this is hard data that no difference was heard. Mr. Leventhal's AES paper attempted to provide guidance for the likes of Mr. Clark who, for various reasons, want to use what would normally be considered an insufficient number of test subjects.
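The arithmetic behind these figures can be checked with an exact binomial calculation. The sketch below assumes that every trial is independent and that the 300 listeners' answers can be pooled into a single set of 4800 trials; that pooling is a simplification made for illustration, and the function name is hypothetical.

```python
from math import comb

def chance_probability(correct, trials):
    """P(score >= correct) if every answer is a coin-flip guess (p = 0.5)."""
    # Exact integer count of favourable outcomes, divided by all 2**trials outcomes.
    favourable = sum(comb(trials, i) for i in range(correct, trials + 1))
    return favourable / 2**trials

single = chance_probability(10, 16)                # one listener, 10 of 16
threshold = chance_probability(12, 16)             # one listener, 12 of 16
pooled_10 = chance_probability(300 * 10, 300 * 16) # 300 listeners, each 10 of 16
pooled_8 = chance_probability(300 * 8, 300 * 16)   # 300 listeners averaging 8 of 16

print(f"10/16, one listener : {single:.4f}")
print(f"12/16, one listener : {threshold:.4f}")
print(f"10/16, 300 listeners: {pooled_10:.3e}")
print(f" 8/16, 300 listeners: {pooled_8:.4f}")
```

With one listener, a score of 10 out of 16 would arise by guessing more than one time in five, whereas 12 out of 16 falls below the 0.05 threshold. The same 62.5% hit rate pooled over 4800 trials is vanishingly unlikely under guessing, which is why the larger sample turns an inconclusive score into strong evidence.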

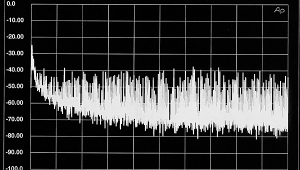

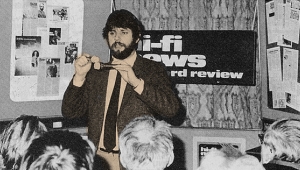

In the blind listening tests carried out when I was working for Hi-Fi News & Record Review, I found audible changes could be introduced by reversing the absolute phase of the signal (footnote 5), by inserting an electrolytic capacitor into the signal path (footnote 5), and by inserting a Sony PCM-F1 digital processor in 14-bit A-D/D-A mode (footnote 6). In all these tests, a large number of subjects (up to 300 people, each listening to several presentations) took part, and I would suggest that this is why these results were positive. Similarly, Martin Colloms organized a blind test for HFN/RR (footnote 7), the results of which revealed differentiation by ear of two amplifiers which measurements predicted would be subjectively identical. Again, a positive result went hand in hand with a large number of listeners, in this case nearly 100 engineers and students at a British AES meeting last December. (The listening test was supervised by AES officials to ensure that the conditions were sufficiently rigorous.)

As I see it, the real crux of the matter is that so much of the so-called "objective" testwork carried out in the field of hi-fi reproduction, at least as far as the testing of actual products is concerned, produces null results. The people organising the tests do not want to admit that their time has been wasted, so do not want to admit that the results are meaningless. In addition, the listening conditions in such tests, as indicated by Mr. Clark, are far removed from the manner in which people usually enjoy reproduced music.

When two products which so obviously differ when listened to on a casual basis—"casual" in the sense that the listener has no bias towards hearing or not hearing a difference—cannot be distinguished in a test, then I would suggest that, much as it upsets Mr. Clark, the only conclusion to draw is that the test is flawed. The beauty of Mr. Leventhal's paper is that it suggests reasons as to why this should be the case. And frankly, I find it incomprehensible that Mr. Clark holds up what is possibly the main reason for producing null results, the unnatural listening conditions, as an excuse for not increasing the statistical power of his tests.

In my opinion, too many of the so-called "objective" testers retreat behind an academic smokescreen to disguise the fact that all that can be concluded from their double-blind tests is that under the limited circumstances, no difference could be heard. As far as the real world of equipment reviews is concerned, then until those inhabiting the academic stratosphere develop the analytical tools capable of doing the job, "informed" subjective reviewing, as practised by the likes of J. Gordon Holt, Anthony H. Cordesman, Martin Colloms, and—dare I mention his name?—Harry Pearson, does best at giving the readers of magazines such as Stereophile the hard information they need.—John Atkinson

Footnote 5: HFN/RR, January 1986

Footnote 6: HFN/RR, May 1984

Footnote 7: HFN/RR, May 1986
