
The Highs & Lows of Double-Blind Testing

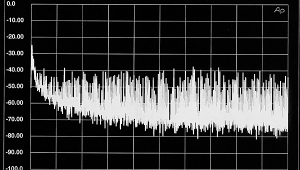

The Example: Since most of the points to be discussed are statistical, it will help to focus on a concrete example. Consider Greenhill and Clark's assessment of the McIntosh MC 2002 amplifier in Audio (April '85, pp.56-60). The McIntosh was compared in a double-blind test with another amplifier, apparently the Levinson ML-9. The listener (Greenhill) correctly identified "the randomly selected amp in only 10 out of 16 trials," a rate that failed to reach "the desired 95%" level of significance. On p.96 of the same issue of Audio, Greenhill and Clark discuss their methodology and state: "Using published tables of the binomial statistical distribution, we have calculated the likelihood of correct scores for each listening test. We found that obtaining a score of 12 correct out of 16 trials gives a confidence level of 95% or more that the outcome was not due to chance. Results at this confidence level are the minimum commonly accepted by sciences such as medicine before they're judged to be statistically significant." (Greenhill and Clark use the expression "95% level of significance or confidence" in many of their writings. It is more common to use the expression ".05 level of significance," which I used in my previous writings.)
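The 12-of-16 criterion can be checked directly from the binomial distribution. The sketch below is only an illustration, not Greenhill and Clark's own procedure: it assumes a one-sided test against pure guessing (probability 0.5 of a correct identification on each trial), which is the model that yields their 12-of-16 threshold. The helper names `upper_tail` and `min_correct` are hypothetical.

```python
from math import comb

def upper_tail(n: int, k: int, p: float = 0.5) -> float:
    """P(X >= k) for X ~ Binomial(n, p): chance of k or more correct out of n."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))

def min_correct(n: int, alpha: float = 0.05) -> int:
    """Smallest score whose probability under guessing (p = 0.5) is <= alpha."""
    for k in range(n + 1):
        if upper_tail(n, k) <= alpha:
            return k
    return n + 1  # no attainable score is significant at this alpha

n = 16
print(min_correct(n))               # 12
print(round(upper_tail(n, 12), 4))  # 0.0384
print(round(upper_tail(n, 11), 4))  # 0.1051
print(round(upper_tail(n, 10), 4))  # 0.2272
```

Under these assumptions, a guessing listener would score 10 or more out of 16 about 23% of the time, far above the .05 criterion. Even 11 out of 16 (about 11%) falls short, so 12 is the smallest passing score.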

Greenhill and Clark's conclusion regarding listening tests with the McIntosh (p.60) states: "The controlled tests, however, reveal no significant sonic differences (below clipping) between the McIntosh and the reference ML-9 amplifier, confirming Clark's belief that well-designed amplifiers today differ little sonically." (emphasis added)

Using More Trials or Listeners: Mr. Clark complains that there are practical problems in following my advice to increase N. (I wrote that a larger N will (a) decrease Type 2 error, roughly the probability that the statistical analysis will find the data nonsignificant when audible differences in fact occur, and (b) increase statistical power, roughly the probability that the statistical analysis will find the data statistically significant when audible differences in fact occur. The two are complementary: power equals 1 minus the Type 2 error rate.) The practical problems Mr. Clark describes are indeed serious, as I acknowledged at the end of my letter, so I am not really advising him to increase N. Indeed, without knowing what research question an experimenter is asking and how important Type 2 error (or power) is to the project, I can provide no advice whatever on N! I can only inform the experimenter of the statistical fact that Type 2 error increases and statistical power decreases as N decreases, and he can take it from there.
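The relationship among N, Type 2 error, and power can be made concrete with the same binomial model. The sketch below assumes a one-sided exact binomial test at the .05 level and a hypothetical listener whose true hit rate is 0.6, that is, a difference that is audible but small enough that the listener is right only 60% of the time. The 0.6 figure and the function names are illustrative assumptions, not values taken from either author.

```python
from math import comb

def upper_tail(n: int, k: int, p: float) -> float:
    """P(X >= k) for X ~ Binomial(n, p); returns 0 when k > n."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))

def critical_score(n: int, alpha: float = 0.05) -> int:
    """Smallest score that guessing (p = 0.5) reaches with probability <= alpha."""
    # k = n + 1 always qualifies (its tail is 0), so next() cannot run out.
    return next(k for k in range(n + 2) if upper_tail(n, k, 0.5) <= alpha)

def power(n: int, p_true: float, alpha: float = 0.05) -> float:
    """Probability of a significant score when the true hit rate is p_true."""
    return upper_tail(n, critical_score(n, alpha), p_true)

n, p_true = 16, 0.6  # hypothetical small-to-moderate audible difference
pw = power(n, p_true)
print(f"critical score: {critical_score(n)} of {n}")     # critical score: 12 of 16
print(f"power = {pw:.3f}, Type 2 error = {1 - pw:.3f}")  # power = 0.167, Type 2 error = 0.833
```

Under these assumptions, a real but modest difference would go undetected in roughly five runs out of six at N = 16. Because the binomial distribution is discrete, power does not fall smoothly with every one-trial reduction in N (it follows a sawtooth), but the overall trend is the one stated above.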

I do advise, however, that if a "great debate" study is planned, one whose results are meant to determine, for example, whether amplifiers sound alike or have at least small-to-moderate audible differences, then a large N should be used so that nonsignificant findings can be interpreted as evidence that amplifiers sound alike. If practical problems force the experimenter to use a small N, then the experimenter should tell the reader that statistical power was so low (or Type 2 error risk so high) that small-to-moderate audible differences had only a small probability of producing significant findings; hence, nonsignificant findings should be viewed as uninterpretable. Mr. Clark's small-N study of the McIntosh amp did the opposite: its nonsignificant findings were interpreted as "confirming Clark's belief that well-designed amplifiers today differ little sonically."
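How large is "large"? Under the same assumptions (one-sided exact binomial test at α = .05, hypothetical true hit rate of 0.6), one can search for the smallest N at which power first reaches a chosen target. The 0.8 target below is a common convention and is used here only as an illustrative assumption; the function names are hypothetical.

```python
from math import comb

def upper_tail(n, k, p):
    """P(X >= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))

def power(n, p_true, alpha=0.05):
    """Power of a one-sided exact binomial test against guessing (p = 0.5)."""
    k_crit = next(k for k in range(n + 2) if upper_tail(n, k, 0.5) <= alpha)
    return upper_tail(n, k_crit, p_true)

def smallest_n(p_true, target=0.8, alpha=0.05, n_max=1000):
    """First N whose power reaches target; None if none does up to n_max."""
    for n in range(1, n_max + 1):
        if power(n, p_true, alpha) >= target:
            return n
    return None

n_needed = smallest_n(0.6)
print(n_needed, round(power(n_needed, 0.6), 3))
```

With these assumptions the answer is on the order of 150 trials, roughly ten times the 16 used in the McIntosh test. Because of the sawtooth mentioned earlier, a slightly larger N can occasionally have lower power than the value returned, so in practice one would check neighbouring values as well.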

I do not criticize Mr. Clark for taking practical problems into account when selecting N. But the statistical equations for calculating Type 2 error and power do not care about practical problems, nor will blaming me for telling him about Type 2 error and power solve his problem. Whatever the decision regarding N, it would be valuable to inform the reader of the Type 2 error or statistical power of the analysis rather than sweep it under the rug. The table I generated for my paper allows this information to be obtained without calculation.
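A table of that kind is straightforward to regenerate. The sketch below is not the table from the paper; it assumes the same one-sided exact binomial test at α = .05 and prints power for a few illustrative values of N and of the listener's true hit rate. Both grids, and the function names, are arbitrary choices made for this example.

```python
from math import comb

def upper_tail(n, k, p):
    """P(X >= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))

def power(n, p_true, alpha=0.05):
    """Power of a one-sided exact binomial test against guessing (p = 0.5)."""
    k_crit = next(k for k in range(n + 2) if upper_tail(n, k, 0.5) <= alpha)
    return upper_tail(n, k_crit, p_true)

ns = [16, 25, 50, 100]           # illustrative numbers of trials
hit_rates = [0.6, 0.7, 0.8, 0.9]  # illustrative true hit rates

print("power of a one-sided .05 test; rows = N, columns = true hit rate")
print("    N" + "".join(f"{p:>8.1f}" for p in hit_rates))
for n in ns:
    print(f"{n:5d}" + "".join(f"{power(n, p):8.3f}" for p in hit_rates))
```

Reading across a row shows how quickly power rises as the audible difference grows; reading down a column shows what more trials buy for a difference of fixed size. The Type 2 error for any cell is 1 minus the printed value.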

Thousands of Comparisons: Mr. Clark states that Leventhal fails "to acknowledge... that our tests actually do use a very large number of trials..." He adds: "A single listener may make over 1000 comparisons in the course of arriving at the 16 decisions we call 'trials'." Mr. Clark needs to reread his own report of his analysis (quoted above). The statistical analysis he chose to perform was based on the 16 decisions he calls trials, not on the many comparisons that may have been required for each trial. And Mr. Clark has chosen to base his conclusions about the audibility of differences (see italicized passage) on that statistical analysis, not on the many comparisons. Hence, it is quite appropriate for me to describe the statistical implications of an analysis based on only 16 trials: a large risk of overlooking small-to-moderate audible differences (large Type 2 error) and a low probability of finding them (low statistical power).

And it is statistically and methodologically incorrect for Mr. Clark to imply that his statistical analyses or conclusions are made trustworthy by the large statistical power resulting from an N of thousands rather than jeopardized by the small statistical power resulting from an N of 16.

Power Reversed: Mr. Clark states, "Let's suppose we altered our statistical criteria, as Leventhal suggests, so that it would be possible to conclude with some certainty that a difference was not heard in a test." (This amounts to reducing the risk of Type 2 error.) Mr. Clark asks, "What might we accomplish and what is the price we have to pay?" Mr. Clark says we accomplish very little because of problems in generalizing findings of inaudible differences to other listeners, equipment, and so on.

Mr. Clark ignores the methodological fact that the generalizability of findings does not normally depend on the Type 2 error rate of the experiment, so reducing Type 2 error will not normally affect generalizability. (There is one exception. Type 2 error can be reduced in many ways, but if it is reduced by increasing N, then generalizability to other listeners will, if anything, increase.) Furthermore, if the experiment is designed so that findings of inaudibility cannot be generalized, then findings of audibility will normally suffer similar problems of generalizability. Accordingly, if generalizability of findings is important, then Mr. Clark should consider not running the experiment, regardless of Type 2 error.
