
The Highs & Lows of Double-Blind Testing Page 3

The statement "the number of correct identifications observed indicates that differences are audible at the .05 level of significance" roughly means the following: for a listener who cannot hear differences, there is no more than a .05 probability that he will, by chance, make at least as many correct identifications as were actually observed. Were the same listening test repeated infinitely with this listener, no more than 5% of the replications would provide statistically significant data leading to the incorrect conclusion that the subject heard differences. In this instance, the probability of mistakenly concluding that inaudible differences are audible is, at most, .05. This error is called a Type 1 error; the probability of Type 1 error is limited to the significance level selected for the significance test (in this case, .05). By selecting a suitable significance level during the data analysis, the experimenter can select the risk of Type 1 error he is willing to tolerate.
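The "repeated infinitely" idea can be imitated with a simulation. The sketch below is only an illustration: it assumes a 16-trial test and a criterion of 12 correct identifications (numbers chosen for the example), and the function name, the replication count, and the seed are all hypothetical. It counts how often a listener who is purely guessing would reach the criterion.

```python
import random

def false_alarm_rate(n_trials=16, criterion=12, replications=100_000, seed=1):
    """Fraction of simulated tests in which a pure guesser reaches the criterion."""
    rng = random.Random(seed)  # fixed seed (an arbitrary choice) for repeatability
    hits = 0
    for _ in range(replications):
        # Each trial is a fair coin flip; count the correct guesses in one test.
        correct = sum(rng.random() < 0.5 for _ in range(n_trials))
        if correct >= criterion:
            hits += 1
    return hits / replications

rate = false_alarm_rate()
print(f"Simulated Type 1 error rate: {rate:.4f}")
```

Under these assumptions the simulated rate should stay below .05, which is what "significant at the .05 level" promises for a listener who hears nothing.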

Why not select the smallest possible significance level to test the data, thereby producing the smallest possible risk of Type 1 error? Because, as one reduces the risk of Type 1 error, the risk of Type 2 error increases. Type 2 error consists of mistakenly concluding that audible differences are inaudible. Mr. Archibald, in his reply to reader Huss, has in effect expressed a fear that Type 2 error is likely with the ABX comparator. It is my contention that Type 2 error is likely when small-trial listening tests are analyzed at the .05 significance level. The ABX comparator itself is probably innocent if it is as good a device as Mr. Holt, and others, have reported.

The risk of Type 2 error increases not only as you reduce Type 1 error risk, but also with reductions in the number of trials (N) and in the listener's true ability to hear the differences under test (p). (All of this is explained in great detail in Leventhal, 1985.) Since one never really knows p, and one can only speculate on how to increase it (eg, by carefully selecting the musical selections and ancillary equipment to be used in the listening test), one can reduce with certainty the risk of Type 2 error in a practical listening test only by increasing either N or the risk of Type 1 error. (Remember, Type 1 error risk can be deliberately increased by selecting a larger significance level for the significance test, which simultaneously reduces Type 2 error risk.)
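For a test in which each trial is a choice between two alternatives, these dependencies can be written out with the binomial distribution. This is a standard textbook formulation offered as a sketch, not a quotation from Leventhal (1985). The symbols are assumptions introduced here: X is the number of correct identifications, r is the minimum number required to declare significance, and α and β stand for the Type 1 and Type 2 error probabilities.

$$
\alpha = P(X \ge r \mid p = .5) = \sum_{k=r}^{N} \binom{N}{k} (.5)^{N},
\qquad
\beta = P(X < r \mid p) = \sum_{k=0}^{r-1} \binom{N}{k}\, p^{k} (1-p)^{N-k}
$$

Choosing a smaller significance level forces a larger r, which adds terms to the sum for β; a smaller p shifts probability toward low counts, which also enlarges β.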

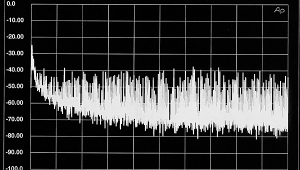

End of background. (If you never had a statistics course, you must now be developing a migraine.) To make my point that small-trial (small-N) listening tests analyzed at the .05 significance level lead to large Type 2 error risks, I need to cite actual calculated examples. Fortunately, tedious statistical calculation can be avoided by using a table I generated (fig.1) for my AES paper (Leventhal, 1985). The table applies only to listening tests that require the listener, on each trial, to choose between two alternatives. In this kind of trial the probability of a correct choice by chance is .5, as with the flip of a coin. Since reader Huss refers to a 16-trial listening test reported in Audio, I will begin there with a detailed example of how to use the table, excerpts of which appear below.

Fig.1:

| N | r | Type 1 Error | Type 2 Error, p=.6 | Type 2 Error, p=.7 | Type 2 Error, p=.8 | Type 2 Error, p=.9 |
|---|---|---|---|---|---|---|
| 16 | 13 | .0106 | .9349 | .7541 | .4019 | .0684 |
| 16 | 12 | .0384 | .8334 | .5501 | .2018 | .0170 |
| 16 | 11 | .1051 | .6712 | .3402 | .0817 | .0033 |
| 16 | 10 | .2272 | .4728 | .1753 | .0267 | .0005 |
| 16 | 9 | .4018 | .2839 | .0744 | .0070 | .0001 |
| 50 | 32 | .0325 | .6644 | .1406 | .0025 | .0000 |
| 50 | 31 | .0595 | .5535 | .0848 | .0009 | .0000 |
| 100 | 59 | .0443 | .3775 | .0072 | .0000 | .0000 |
| 100 | 58 | .0666 | .3033 | .0040 | .0000 | .0000 |

This table indicates the minimum number of correct identifications (r) for concluding that performance is better than chance, and the resulting Type 1 and Type 2 error probabilities for p values from .6 to .9 in listening tests with 16, 50, and 100 trials (N). Selecting the ".05 level of significance" amounts to selecting an r value for which Type 1 error approaches, but does not exceed, .05. (Excerpted from Leventhal, 1985.)

Suppose an investigator wishes to use the table to analyze the results of a 16-trial (N = 16) listening study. What is the minimum number of correct identifications (r) that the investigator should require of the listener before concluding that performance is statistically significant (ie, that differences are audible)? The table shows that the probability of Type 1 error (falsely concluding that inaudible differences are audible) will be .0384 if the investigator requires a minimum r of 12. Relaxing the criterion to an r of 11 would raise that probability to .1051. If the probability of Type 1 error is not to exceed .05, then, our investigator must choose an r of 12 for the listener to meet or exceed.
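The choice of r can be checked directly from the binomial distribution rather than looked up. The following is a minimal sketch, not the procedure used to generate the table in Leventhal (1985); the function names `type1_error` and `min_correct` are hypothetical, and the only assumption is that a guessing listener is right on each trial with probability .5.

```python
from math import comb

def type1_error(n, r):
    """P(at least r correct out of n) for a listener who is purely guessing."""
    return sum(comb(n, k) for k in range(r, n + 1)) / 2 ** n

def min_correct(n, alpha=0.05):
    """Smallest criterion r whose Type 1 error does not exceed alpha."""
    # r = n + 1 can never be reached, so its error is 0 and the loop always returns.
    for r in range(n + 2):
        if type1_error(n, r) <= alpha:
            return r

for n in (16, 50, 100):
    r = min_correct(n)
    print(f"N={n}: r={r}, Type 1 error={type1_error(n, r):.4f}")
```

For N = 16 this yields an r of 12 with a Type 1 error of about .0384, in agreement with the table.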

But, with the selection of an r of 12, the table shows that the probability of Type 2 error (concluding that audible differences are inaudible) will be .8334 if audible differences between the two components are so slight that the listener can correctly identify the components only 60% of the time (p = .6). If audible differences are slightly larger and the listener can correctly identify the components 70% of the time (p = .7), then the probability of Type 2 error will be .5501, and so on. (The p values should be interpreted as the proportion of correct identifications the listener will provide when given an infinite number of trials under the conditions of the listening study, even if those conditions are not ideal for making correct identifications.)
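The Type 2 figures follow from the same binomial reasoning: a listener whose true hit rate is p commits a Type 2 error whenever he scores fewer than r correct. The sketch below assumes independent trials with a constant p; the function name `type2_error` is hypothetical and the code is an illustration, not the original calculation behind the table.

```python
from math import comb

def type2_error(n, r, p):
    """P(fewer than r correct out of n) for a listener whose true hit rate is p."""
    return sum(comb(n, k) * p ** k * (1 - p) ** (n - k) for k in range(r))

# A 16-trial test with a criterion of 12, for the p values in the table.
for p in (0.6, 0.7, 0.8, 0.9):
    print(f"p={p}: Type 2 error={type2_error(16, 12, p):.4f}")
```

For p = .6 and p = .7 this gives approximately .8334 and .5501, the values cited above.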
