Nothing Is Real

It is a common put-down of audiophiles: "You're imagining things." But is this a meaningful criticism? Is there a real difference between "reality" and "illusion"? Or was Professor Dumbledore on to something? I have been interested in human perception almost as long as I have been working in magazines. This sound is something with which everyone in this room will be familiar: a 1kHz tone at –20dBFS. [Play 1kHz, –20dBFS sinewave tone] What I'd like you to do now is to imagine the same tone for 10 seconds. I believe a scan of your brain would show the same activity in both situations: with a "real" sound and with an "imaginary" sound. We can't directly experience reality; instead, our brain uses the input of our senses to construct an internal model that reflects that external reality, to a greater or lesser degree. So what is reality, what is the illusion? Internally, they are the same thing. That's why hallucinations are so unsettling—there is no way of knowing without further investigation that they don't correspond to anything in the outside world.
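For readers who want to reproduce the test signal, here is a minimal sketch of how such a tone might be generated. It assumes that "–20dBFS" refers to the sinewave's peak level relative to digital full scale; the function name `sine_tone`, the 48kHz sample rate, and the one-second duration are illustrative choices, not details from the lecture.

```python
import math

def sine_tone(freq_hz=1000.0, level_dbfs=-20.0, duration_s=1.0, sample_rate=48000):
    """Return float samples in [-1, 1] for a sine whose peak sits at level_dbfs.

    Assumption: 0 dBFS corresponds to a peak amplitude of 1.0.
    """
    # Convert decibels to a linear amplitude ratio: -20 dB -> 0.1 of full scale.
    peak = 10.0 ** (level_dbfs / 20.0)
    n_samples = int(duration_s * sample_rate)
    return [
        peak * math.sin(2.0 * math.pi * freq_hz * i / sample_rate)
        for i in range(n_samples)
    ]

samples = sine_tone()
```

The resulting list swings between roughly -0.1 and +0.1, one tenth of full scale. Nothing in those numbers says "tone"; that label is applied by the listener.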

I am sure that some are shifting a little in their chairs, so I will demonstrate this conjecture with some music. A couple of years after the Abbey Road sessions I mentioned earlier, the band got back together to record an album for DJM Records. Here's a picture of us in 1974: three sharp-dressed men.

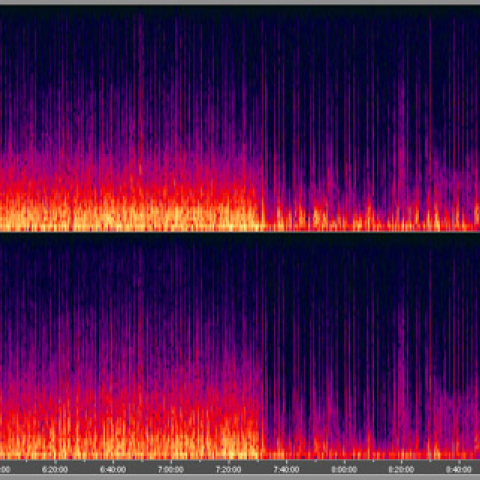

What is unusual is that none of this is real. There are no individual sounds of instruments being reproduced by the loudspeakers. Even though you readily hear them, there is no bass guitar, there are no drums, there is no lead vocalist. The external reality is that there are two channels of complex audio-bandwidth voltage information that cause two pressure waves to emanate from the loudspeakers. Everything you hear is an internal construct based on your culture and experience. The impression you get at 2:51 that someone is striking a match to light a cigarette at the right of the stage exists only in your head: your brain back-interpolates from the twin pressure waves striking your ears and concludes that this must have been what happened at the original event.

I first heard this phenomenon described in a talk given by Meridian's Bob Stuart a quarter century ago, and it was discussed at length in Edmund Blair Bolles's A Second Way of Knowing: The Riddle of Human Perception (Prentice Hall Press, 1991). Your brain creates "acoustic models" as a result of the acoustic information reaching your ears. We do this so naturally—after all, it's what we do when our ears pick up real sounds—that it doesn't strike us as incongruous that the illusion of the sounds and spatial aspects of a symphony orchestra can be reproduced by a pair of speakers in a living room.

As with our experience of liquid water, the familiarity and apparent simplicity of perception hides depths of complexity. We just do it. Yet there is as yet no measurement or set of measurements that can be performed on those twin channels of information to identify the sounds I have just described, the sounds you perceived with no apparent effort when you listened to that recording of my band.

So if the brain creates internal models to deal with what is happening in the "real" world, let's examine how those models work.

This internal modeling of reality is quirky. First, with visual stimuli, there is a latency of around 100 milliseconds while the brain processes new data. Visually, we experience the world as it existed a tenth of a second in the past. It has been proposed that we have evolved mechanisms to cope with that neural lag; in effect, our internal models predict what will occur one-tenth of a second in the future, which allows us to react to events in the present—such as catching a fly ball, or maneuvering smoothly through a crowd (footnote 2).

But certain situations can unmask that lag. Something that we must all have experienced is when we have glanced at a clock with a second hand or with a numeric seconds display: The first tick appears to take longer than subsequent ticks. But this isn't an illusion: the first tick does take longer—at least in your reality, as opposed to the clock's—because of the time required for the brain to accommodate new data into its model.

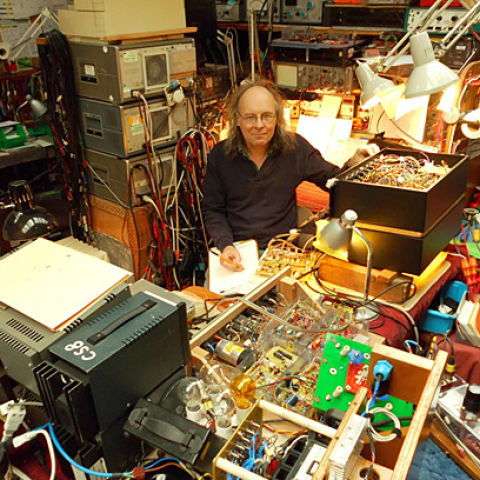

I remember discussing perception with Bob Berkovitz when I visited him at Acoustic Research in Boston, in the early 1980s. The conversation stuck in my mind because Bob, who was working with Ron Genereux on digital signal processing to correct room acoustic problems, defined audio as being "one of the few areas in which an engineer can work without the end product being used to kill people."

During that visit, Bob subjected me to a perceptual test. I sat in a darkened room with a red light flashing in the left of my visual field. At some point, Bob switched off the light on the left and turned on a similarly flashing red light on the right. The question is: What did I see?

The answer is not "A red light flashing on the left, then a red light flashing on the right."

What I saw was a flashing red light on the left that then slowly moved across my field of vision until it was on the right!

It was another moment of satori. The conflict between "reality" and what I perceived seemed to demonstrate that, once the brain has constructed an internal model, it is slow to change that model when new sensory data are received. The brain's latency in processing aural data is shorter than it is with visual data, but it still exists. Otherwise there wouldn't be the phenomenon of "backward masking," where a loud sound literally prevents you from hearing a quiet sound that preceded it.

Here's an audio example analogous to the clock's slower first tick with which everyone will be familiar. When you hook up a new component but with the channels reversed, at first, all you're aware of is that something is not quite right. The orchestral violins are on the left, as they should be, but their image wobbles, and is ambiguously positioned. You don't hear them on the right, where they now should be. Then, when you realize that Left=Right and vice versa, the imaging solidifies and is correctly heard as a channel-reversed image. The thought crystallizes the perception, not the other way around.

Although evolution has optimized the human brain to be an extremely efficient pattern-recognition engine that uses incomplete data to make internal acoustic models of the world, as this example suggests, that same evolutionary development has major implications when it comes to the thorny subject of sound quality.

Footnote 1: Following the lecture, I read in Steven Levy's 1984 book Hackers: Heroes of the Computer Revolution that students at MIT in the 1950s programmed a robot arm to catch a ball, but I don't have any further information on this. Also, on how a fielder manages to catch a ball, I am told that as part of the successive approximation process I describe, he adjusts his position to keep a constant angle between the ball and his eye.

Footnote 2: See, for example, the essay at www.luminous-landscape.com/tutorials/what_we_see.shtml.

The Obie Clayton Band (L–R): John Atkinson, Michael Cox, Alan Eden

Baggies and platform shoes were mandatory in 1974, otherwise Mark Knopfler wouldn't have had anything to rail against in Dire Straits' "Sultans of Swing." Here's a needle-drop of a track from our LP, which was released in 1975, engineered by Jerry Boys (of subsequent Buena Vista Social Club fame), produced by Tony Cox at Sawmills Studio, and mastered by George Peckham. (Yes, it was a "Porky Prime Cut.") I am playing bass guitar, I'm one of the backing vocalists, and I supply the choir of clarinets in the bridge.

[Play Obie Clayton Band: "Blues for Beginners," needle drop from Obie Clayton LP, DJM DJLPS 458 (1975)]

Think about what you've just heard. I mentioned bass guitar, vocals, and clarinets. There is also a lead singer, a piano, guitars, drums, a harmonica. What's so unusual about that?

Live from the 131st AES Convention: JA throws a baseball for Stephen Mejias to catch.

I was at a Mets game a few years ago, thinking how difficult it is for an outfielder to catch a pop-up, given that when the ball leaves the bat, the fielder has almost no data with which to calculate where the ball will land. I was reminded of something Barry Blesser wrote in the October 2001 issue of The Journal of the AES (p.886). "The auditory system . . ." Blesser wrote, "attempts to build an internal model of the external world with partial input. The perceptual system is designed to work with grossly insufficient data."

Catching a ball illustrates Blesser's point, not just about the auditory system's but also the visual system's ability to use incomplete information. At first the fielder has very little info on which to create a model of the ball's trajectory. Certainly there is not enough information to program a robot to catch the ball (footnote 1). The robot needs to use math. By contrast, the fielder's brain continually updates the model with new information—a process of successive approximation, if you will—until, plop, the ball lands in his glove.
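The idea of successive approximation can be illustrated with a toy simulation. This is only a sketch under stated assumptions, not a model of what a fielder's brain does: the ball follows a trajectory with simple linear air drag, while the estimator uses a deliberately crude drag-free model and simply re-predicts the landing point from each new observation. The drag coefficient, launch velocity, launch height, and all function names are hypothetical.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2

def simulate_ball(vx, vy, drag=0.05, dt=0.01):
    """Yield (t, x, y, vx, vy) for a ball with linear drag until it lands."""
    t, x, y = 0.0, 0.0, 1.0  # assumed launch height of 1 m
    while y > 0.0:
        yield t, x, y, vx, vy
        # Update velocity first, then position, one small time step at a time.
        vx += -drag * vx * dt
        vy += (-G - drag * vy) * dt
        x += vx * dt
        y += vy * dt
        t += dt

def drag_free_landing(x, y, vx, vy):
    """Predict the landing x from the current state, ignoring drag."""
    # Positive root of y + vy*t - 0.5*G*t^2 = 0.
    t_land = (vy + math.sqrt(vy * vy + 2.0 * G * y)) / G
    return x + vx * t_land

states = list(simulate_ball(vx=20.0, vy=25.0))
true_landing = states[-1][1]  # x position at the last airborne step

# Error of the crude prediction, re-made at every observation.
errors = [
    abs(drag_free_landing(x, y, vx, vy) - true_landing)
    for _, x, y, vx, vy in states
]
```

With these assumed numbers, the first prediction misses by many meters, the prediction made near the top of the arc is much closer, and the last one is off by a fraction of a meter. The model never becomes correct; it is simply corrected often enough that it is good enough by the time the ball arrives.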
