
Very interesting reading.

There's far too much to comment on here, and this isn't the time or place. (Not to correct, but to ask for expansion. More of this would be great!)

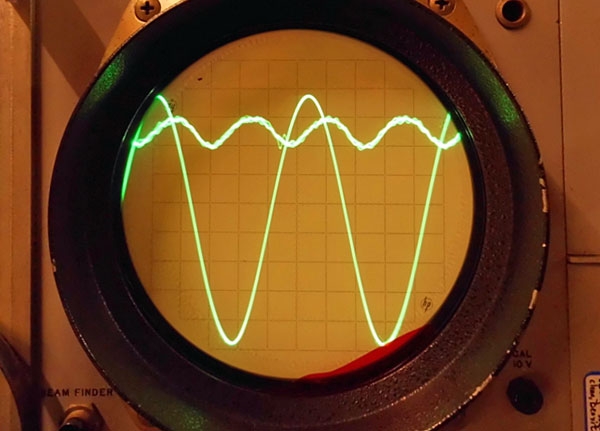

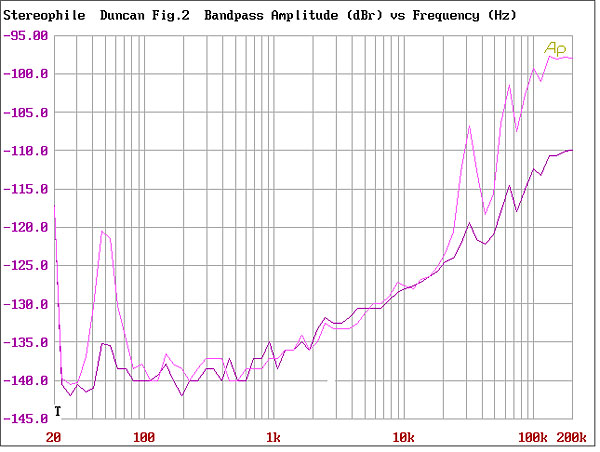

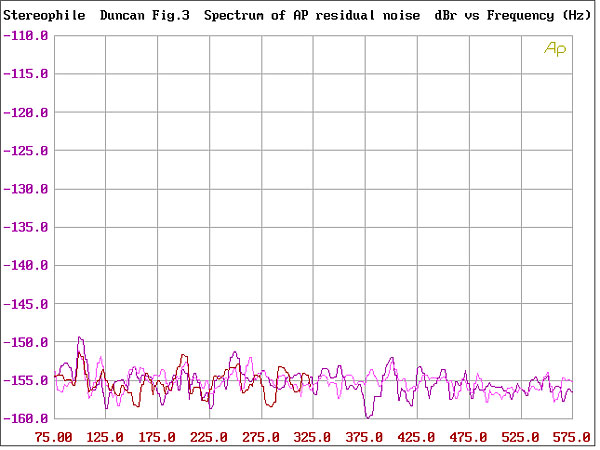

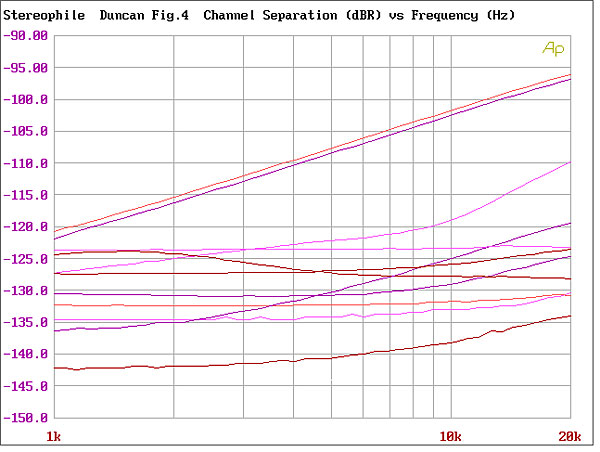

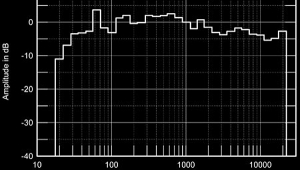

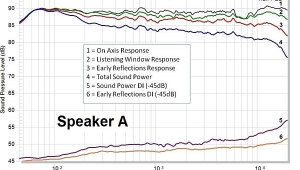

One comment I will make. As Mr. Duncan pointed out, IM products tend to rise and fall in real time as the relative phases of the contributing signal components change. Other effects, such as thermal changes, also shift the distortion pattern over time. This is why averaging the spectrum can be misleading.

If you look at the distortion spectrum over time with a spectrum analyzer that runs in more or less real time, you can see these peaks changing. When averaged, the distortion products look like bumps in the noise floor. But if you zoom in, they're more like fields of grass swaying in the wind. This isn't unique to audio systems - you can see the same thing with various RF systems as well.
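For anyone who wants to see the averaging effect in numbers, here is a rough Python sketch. Everything in it is made up for illustration: a single 1 kHz "distortion product" whose level swings at 0.5 Hz, sitting in white noise, analyzed in 100 ms frames. It is not a model of any real amplifier, just a way to compare the averaged level in one bin with the highest level any single frame reached.

```python
import numpy as np

def frame_spectra(x, frame_len):
    """Split x into non-overlapping frames and return the power spectrum of each."""
    n_frames = len(x) // frame_len
    frames = x[:n_frames * frame_len].reshape(n_frames, frame_len)
    window = np.hanning(frame_len)
    return np.abs(np.fft.rfft(frames * window, axis=1)) ** 2

# All values below are assumptions chosen for illustration only.
fs = 48000                 # sample rate, Hz
frame_len = 4800           # 100 ms frames, so bins are 10 Hz apart
t = np.arange(fs * 4) / fs # 4 seconds of signal

# Hypothetical product at 1 kHz whose level rises and falls twice in 4 s.
envelope = 0.5 * (1 + np.cos(2 * np.pi * 0.5 * t))
rng = np.random.default_rng(0)
x = 1e-3 * envelope * np.sin(2 * np.pi * 1000 * t)
x += 1e-5 * rng.standard_normal(t.size)  # noise floor

power = frame_spectra(x, frame_len)      # shape: (frames, bins)
bin_1k = round(1000 * frame_len / fs)    # index of the 1 kHz bin

avg_db = 10 * np.log10(power[:, bin_1k].mean())   # what an averaged plot shows
peak_db = 10 * np.log10(power[:, bin_1k].max())   # the strongest single frame
low_db = 10 * np.log10(power[:, bin_1k].min())    # the weakest single frame

print(f"peak is {peak_db - avg_db:.1f} dB above the average")
print(f"frame-to-frame swing is {peak_db - low_db:.1f} dB")
```

The averaged figure sits well below the strongest frames and says nothing about how far the product swings from frame to frame, which is the point about averaged spectra being misleading. Plotting `power[:, bin_1k]` against frame number shows the rise and fall directly.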

What you really hear in an audio system are the peaks of each distortion product as they change over time, not so much the averaged signal power. Plain old noise is a constant change of spectral content and level, and you can hear that.

One immediate burning question - why do these design guys disappear into the aether so often?