Professional Headroom

When I first started buying records at the end of the 1950s, I had this vision of the typical recording engineer: A sound wizard wearing a white lab coat rather than a cloak festooned with Zodiacal symbols. He (it was always a "he," of course) would spare no effort, no expense to create a disc (LPs and 45s were all we had) that offered the highest possible sound quality. At that time I also believed that Elvis going into the Army meant the end of rock'n'roll, that my teachers knew everything, that politicians were honest, that socialism was the best form of government, and that talent and hard work were all you needed to be a success. Those ideas crashed and burned as I grew up, of course, but other than the long-discarded white coats, each new record I bought strengthened rather than weakened my image of the recording engineer.

And with good reason: giants walked the studios in the '50s and '60s. Although their names were almost never found on LP sleeves, Bob Fine at Mercury, Rudy Van Gelder at Blue Note, Bill Porter and Lewis Layton at RCA, Kenneth "Wilkie" Wilkinson, Arthur Haddy, Gordon Parry, and Christopher Raeburn at Decca/London, Christopher Parker, Geoff Emerick, and Norman Smith at EMI, Larry Levine with Phil Spector, Glyn Johns, Eddie Kramer, Bruce Botnick, George Chkiantz, Wally Heider, Phil Ramone, Roy Halee, and many others turned out a never-ending succession of recordings with high-quality sound, each one sounding as good as, if not better than, the previous one (footnote 1).

But a wrong turn was taken somewhere in the '70s, even as the hi-fi market underwent its biggest period of growth and the High End sprang into existence. Maybe it was the influence of the cassette, where the concept of quality was almost irrelevant by definition. Or maybe it was the growth of the bean-counter mentality at record companies, where "good enough" replaced "as good as possible." Whatever the cause, the quality of each album I bought became a crapshoot: Not only did the sound of each new LP fail to improve on the last one I bought, most of the time it sounded worse.

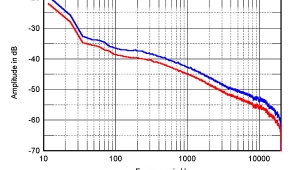

I was reminded of this both when I attended the 1996 Audio Engineering Society Convention last November—you can read RH's and TJN's reports in this month's "Industry Update" (pp.39-51)—and by Bob Ludwig's comments in this month's "Letters" column (p.11). When the specification for the CD was fixed in 1979, it was felt that 16-bit word lengths and a 44.1kHz sample rate were good enough for a consumer medium. And they almost are—provided that the full dynamic range is used and nothing is done to those data between the time they're created and when they're played back. As long as that is the case, the digital artifacts of a "Red Book"-specification CD will be just below the audible threshold over all the audio band (other than in the mid-treble, where the ear is most sensitive).

But, of course, this is almost never the case. The problem with 16-bit digital audio is that the minute something is done to the data—level changing, mixing, equalization, compression—resolution is lost unless the data are optimally redithered back to 16-bit words, and that is also almost never the case. With a professional recording format with the same word depth as the consumer medium, there is no "professional headroom," to use a term I first heard used by Decca's Bill Bayliff almost two decades ago (footnote 2). But when the original recording format is of much higher resolution than the consumer format—as was the case in the golden age I referred to above—then the engineer can do all he needs to do to the data, secure in the knowledge that, at the mastering stage, as much quality can be transferred to the delivery medium as it can hold.
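For readers who like to see the arithmetic, here is a minimal sketch of that loss of resolution. Everything in it is an illustrative assumption rather than anyone's production code: a low-level detail with a peak of 4 LSBs, a hypothetical 20dB level reduction, and the function names `requantize` and `tone_amplitude`. It reduces the level, then returns the result to 16-bit words twice: once by plain rounding, and once with triangular (TPDF) dither added first, which is one common way to redither.

```python
import math
import random


def requantize(samples, dither=False, rng=None):
    """Round samples (given in units of one 16-bit LSB) to whole LSBs."""
    rng = rng or random.Random(0)
    out = []
    for s in samples:
        if dither:
            # Difference of two uniform values: triangular PDF, +/-1 LSB peak.
            s += rng.random() - rng.random()
        out.append(math.floor(s + 0.5))
    return out


def tone_amplitude(samples, cycles):
    """Estimate the amplitude of a sine that completes `cycles` whole
    periods within the block, by correlating against that sine."""
    n = len(samples)
    return 2.0 / n * sum(
        v * math.sin(2 * math.pi * cycles * i / n)
        for i, v in enumerate(samples)
    )


N, CYCLES = 48000, 1000

# Assumed low-level detail: a sine with a peak of 4 LSBs.
detail = [4.0 * math.sin(2 * math.pi * CYCLES * i / N) for i in range(N)]

# Hypothetical level change: 20 dB down, leaving a 0.4 LSB peak.
gain = 10 ** (-20 / 20)
scaled = [gain * s for s in detail]

rounded = requantize(scaled)
redithered = requantize(scaled, dither=True, rng=random.Random(1))

print("plain rounding, nonzero samples:", sum(1 for v in rounded if v != 0))
print("plain rounding, recovered amplitude (LSB):", tone_amplitude(rounded, CYCLES))
print("TPDF redither, recovered amplitude (LSB):", tone_amplitude(redithered, CYCLES))
```

With plain rounding, every sample of the attenuated detail falls below half an LSB and the output is digital silence; the information is gone for good. With dither, the individual samples are noisier, but the tone is still there at close to its 0.4 LSB amplitude, sitting beneath the noise. A higher-resolution working format avoids having to make that choice at every processing step.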

From what I saw at the AES Convention, modern recording is almost never like that. Yes, there are a few digital engineers—like Tony Faulkner in the UK, Bob Katz at Chesky, Jerry Bruck at Posthorn Recordings, Craig Dory at Dorian, and David Smith's team at Sony Classical—who adhere to the old paradigm of keeping the resolution as high as possible for as long as possible. (With appropriate respect paid to those professionals, it is also the philosophy I adhere to in the production and engineering of Stereophile's recordings.)

At the AES, companies like Nagra, dCS, PrismSound, Apogee Electronics, Benchmark, and Sonic Solutions introduced new tools for such old-school engineers to do things the high-quality way. But in his letter, Bob Ludwig reports that producers still send him "over 99% of their digital masters on 16-bit, 44.1kHz DAT." And as fellow mastering engineer Steve Hall of Future Disc Systems recently said, "If someone comes in with a 16-bit DAT, the destruction has already been done....they have pretty well deteriorated the signal to its least usable form." (footnote 3)

If that is the case, all the engineer can do is try to conceal that damage. One famous engineer at AES, for example, said that when he records rock to a 16-bit digital multitrack, he routinely mixes down to analog tape to smooth over the digital nasties, then reconverts to digital for the final master. And you wonder why so many CDs sound the way they do! As Chip Stern said in last month's review of the Mesa Baron amplifier, "You can't polish a turd." (footnote 4)

Larry Archibald reports in this issue's "The Final Word" (p.242) his reaction to comparing the 20-bit Nagra-D master of our Sonata recording with the 16-bit, noise-shaped CD and the test pressings of the LP, due to be released next month. I feel we squeezed as much quality out of the consumer media as possible, and I am proud of their sound. But if only you could hear the original! That is what excites me about DVD—at last, audiophiles would have a source equal to the demands of the best playback systems!

Footnote 1: This is not meant to be a definitive list. If I've left out someone you admire, write to let me know.

Footnote 2: See "Practical Digital Recording," Hi-Fi News & Record Review, August 1979.

Footnote 3: Mix, December 1996, p.66.

Footnote 4: Stereophile, January 1997, p.165.