
The Highs & Lows of Double-Blind Testing Page 4

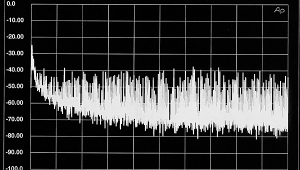

Thus, with a 16-trial listening test analyzed at the conventional .05 level of significance, the probability of the investigator overlooking differences so subtle that the listener can correctly identify them only 60% of the time is a whopping .8334! Accordingly, when true differences between components are subtle, it is not surprising that 16-trial listening tests with (or without) the ABX comparator typically fail to find them.

Footnote 2: It's this kind of detailed "disclosure statement" (he calls it an "error budget") that Anthony H. Cordesman has been demanding from the A-B advocates. In other words, all statistical trials and analyses run certain risks of error; in real statistics, these risks are specified so that subsequent examiners can evaluate the test's validity.—Larry Archibald

What if 50 trials are run? The table shows that the investigator must require the listener to make 32 or more correct identifications (r = 32) to conclude that differences are audible at the .05 level of significance. With an r of 32, the probability of concluding that inaudible differences are audible (Type 1 error) is .0325 and the probability of concluding that audible differences are inaudible (Type 2 error) when the listener can make correct identifications 60% of the time is .6644. Even with as many as 100 trials, analyzing data at the .05 significance level will result in a probability as high as .3775 for overlooking such subtle differences. Thus, for subtle differences, a very large number of trials is necessary to bring down Type 2 error risk to acceptable limits when Type 1 error is limited to .05.
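The error risks quoted above follow from the binomial distribution. As a minimal sketch, assuming each trial is independent, that a listener who hears no difference guesses correctly with probability .5, and that a listener who hears a subtle difference is correct with probability p = .6, the following Python computes both risks for a given number of trials and a required number of correct identifications r. The function names are illustrative and are not taken from the referenced paper.

```python
from math import comb


def binom_tail(n, r, p):
    """Return P(X >= r) for X ~ Binomial(n, p)."""
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(r, n + 1))


def type1_risk(n, r):
    """Risk of calling an inaudible difference audible (listener is guessing, p = .5)."""
    return binom_tail(n, r, 0.5)


def type2_risk(n, r, p):
    """Risk of calling an audible difference inaudible when the listener is correct with probability p."""
    return 1.0 - binom_tail(n, r, p)


if __name__ == "__main__":
    # (trials, required correct identifications) pairs discussed in the text
    for n, r in [(16, 12), (50, 32)]:
        print(f"n={n:3d} r={r:2d}  "
              f"Type 1 = {type1_risk(n, r):.4f}  "
              f"Type 2 (p=.6) = {type2_risk(n, r, 0.6):.4f}")
```

Run as a script, this should reproduce the 16-trial and 50-trial figures cited in the text to four decimal places.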

Another way to bring down Type 2 error risk is to allow Type 1 error risk to increase. For example, in a 16-trial study, reducing r from 12 to 9 will increase Type 1 error from .0384 to .4018 but will reduce Type 2 error, when p is .6, from .8334 to .2839. With an r of 9, most of the 16-trial listening tests in Audio would result in statistically significant results! The trouble is, of course, that the decreased risk of overlooking audible differences (Type 2 error) was purchased at the price of an increased risk of identifying inaudible differences as audible (Type 1 error).
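The trade-off can be made visible by tabulating both risks as r is lowered. The sketch below assumes the same binomial model as the text (independent trials, p = .5 for a guessing listener, p = .6 for a listener who hears a subtle difference); `tradeoff_table` is a hypothetical helper name introduced here for illustration.

```python
from math import comb


def tail_prob(n, r, p):
    """Return P(X >= r) for X ~ Binomial(n, p)."""
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(r, n + 1))


def tradeoff_table(n, p, r_values):
    """Return (r, Type 1 risk, Type 2 risk) for each candidate threshold r."""
    rows = []
    for r in r_values:
        type1 = tail_prob(n, r, 0.5)      # guessing listener reaches r by chance
        type2 = 1.0 - tail_prob(n, r, p)  # genuine listener falls short of r
        rows.append((r, type1, type2))
    return rows


if __name__ == "__main__":
    print(" r  Type 1  Type 2")
    # Lower the threshold from 12 to 9 in a 16-trial test
    for r, t1, t2 in tradeoff_table(16, 0.6, range(12, 8, -1)):
        print(f"{r:2d}  {t1:.4f}  {t2:.4f}")
```

Each step down in r raises the Type 1 column and lowers the Type 2 column; the number of trials is fixed, so one risk can only be reduced at the expense of the other.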

The above illustrates how Type 1 and Type 2 error risks can be traded off for each other, which raises the question of how large one error risk should be relative to the other. (This is a complex issue dealt with in my above-referenced paper, so I will not trouble your migraine with it now.)

The unavoidable conclusion from all this is that, if one intends to employ the .05 significance level to determine whether differences are audible, then conducting a small-trial listening test in order to find true subtle differences between components will result in an unacceptably high risk of overlooking those differences. This amounts to an unacceptably low probability of finding them. (Incidentally, the probability of finding an audible difference, referred to as "power," equals 1 minus the probability of Type 2 error. Small-N listening tests are said to have less power than large-N tests.)

I would suggest trying the ABX comparator in large-trial listening tests. How large? Well, you decide what risks you are willing to accept for Type 1 and Type 2 error, and then consult the table to determine the required number of trials. Be sure to report those error risks to your readers (footnote 2).
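For readers without the table at hand, the lookup can be approximated by a direct search. This is only a sketch under assumptions that are mine rather than the table's: independent trials, a guessing probability of .5, a chosen value of p for the listener, and a search rule that, for each number of trials, takes the smallest r whose Type 1 risk does not exceed the chosen limit. The names `critical_r` and `trials_needed` are hypothetical.

```python
from math import comb


def tail_prob(n, r, p):
    """Return P(X >= r) for X ~ Binomial(n, p); 0.0 when r > n."""
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(r, n + 1))


def critical_r(n, alpha):
    """Smallest r with P(X >= r | guessing) <= alpha; returns n + 1 if no r qualifies."""
    tail = 0.0
    r = n + 1
    for k in range(n, -1, -1):
        tail += comb(n, k) / 2**n  # add P(X = k) under p = .5
        if tail > alpha:
            break
        r = k
    return r


def trials_needed(alpha, beta, p, max_n=1000):
    """First n (with its r) meeting Type 1 <= alpha and Type 2 <= beta, or None."""
    for n in range(1, max_n + 1):
        r = critical_r(n, alpha)
        if r > n:
            continue  # too few trials to reach the alpha limit at all
        if 1.0 - tail_prob(n, r, p) <= beta:
            return n, r
    return None


if __name__ == "__main__":
    # Example risks chosen for illustration only
    print(trials_needed(alpha=0.05, beta=0.20, p=0.6))
```

Because correct-identification counts are whole numbers, the risks do not fall smoothly as trials are added; the sketch simply reports the first number of trials that satisfies both limits.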

By the way, what do you think the Type 1 and Type 2 error risks are for your conclusions about audible differences arising from your conventional, non-double-blind listening tests? I will wager you do not know, which illustrates one of the main advantages of conducting a statistical analysis of one's data, namely, one can determine the probability of being wrong. Or don't you make mistakes? (One does not need to run a double-blind test to conduct a statistical analysis of one's data. But data generated by a double-blind test (ie, number of correct identifications out of a given number of attempts) are easily subjected to statistical analysis.)

On another matter, I agree with Mr. Archibald that J. Gordon Holt missed some of the points raised by reader Huss. Mr. Holt stated that consistencies in independently prepared reports from several reviewers "pretty much rule out prejudices or self-deception"; hence, double-blind tests are not necessary. Research methodologists know that prejudice and self-deception are not so easily ruled out by employing independent reviewers. The reason is that, while reviewers may listen to and report on equipment without consulting or communicating with each other, there may nevertheless be commonalities among them which lead them to make similar errors. For example, independent reviewers may have similar expectations (eg, they may expect tube electronics to sound less bright) or similar preferences (eg, they may like, or think they like, tube equipment). Similarity of reviews from "independent" reviewers is consistent not only with Mr. Holt's interpretation that the reviews reveal the truth about components, but with another interpretation: the reviewers are all mistaken, the similarity having been produced by, say, similar expectations. Simply stated, similarity of reviews may result merely from similarity of biases.
