The Blind Leading the Blind? Letters, October 2005

Letters in response appeared in October 2005 (Vol.28 No.10):

Curious

Editor: There was a curious juxtaposition of editorial content and advertising in the August 2005 Stereophile. Page 3 has Jon Iverson discussing the problems of double-blind testing, and concluding that such testing can identify only who has the best ears. Yet then, on p.7, Revel, a respected high-end speaker manufacturer, talks about how their new and most affordable line of speakers was "subjected to the ultimate test—double-blind listening." Are we to believe Mr. Iverson or Revel? Does the editorial half of Stereophile know what gems are being published by the advertising half?—Joe Sutherland, joesutherland@sprintmail.com

Amazing

Editor: It is amazing how these things come back around after all these years. The letters generated by the "Blind Listening Test" debate rage on, and yet there exists one indisputable fact, which I mentioned in a handwritten letter to Stereophile 16 years ago (never published, probably because I rambled on back then). When I first read the debate in the form of letters, responses, and editorials in these pages 16 years ago, I wondered how this fact could escape the editorial staff. I wondered again today, after reading Jon Iverson's August "As We See It," how it could possibly be that I have never heard anyone at Stereophile mention the word validation.

It just escapes me that no one has ever asked the question (at least in print): Where are the positive controls? When has a blinded listening test ever been shown to consistently demonstrate a difference? Any good test must be validated before it can produce any meaningful information. Have blind listening tests ever been validated? Can anyone show us a positive control for blind listening tests? Anyone? Anywhere? Will anyone anywhere who has knowledge of a blind-listening-test validation point the rest of us to that reference?

When are the kind folks (and we know they are kind) who wish to judge equipment via blind listening tests ever going to come up with a positive control and validate this test?! As soon as we have this accomplished, perhaps we can then use the test for something besides reams of wasted opinion. If it can't be done, then let's just drop it as the same kind of poor judgment that leads us back to tequila despite the previous hangover. As of now, blind listening tests have demonstrated only one thing: They are unreliable at testing for differences.—John Markley, MD, Columbia, MO, jgm@mchsi.com

Diatribes

Editor: Jon Iverson's diatribe against blind listening tests ("The Blind Leading the Blind?," August 2005, p.3) is yet another example of the high-end audio community's phobic reaction against truly objective testing.

Blind testing, and the more rigorous double-blind testing, in which neither the reviewer nor the administrator of the test knows the identity of the object under test, are the basis of the most trustworthy forms of experimental design. As an example, the FDA requires pharmaceutical researchers, where possible, to conduct all clinical trials under a double-blind protocol. The foundations of probability sampling, hypothesis testing, and the analysis of variance that are used to determine whether two or more objects are really different, as developed by Ronald Fisher beginning in 1924, were among the major accomplishments in statistical science in the 20th century.

But the audio industry and audio press are afraid of truly objective testing because of what it might disclose—expose might be the better verb. It is analogous to the "theological" debate that some sectors of society have against evolution. Although the preponderance of scientific evidence proves it is true, religionists refuse to believe it because doing so would call into question the existence of God. In the case of high-end audio, the fear discloses the poor relationships among cost, engineering competence, and actual performance.

Iverson's riposte undermines the method by intentionally designing bad experiments. Iverson notes (correctly) that because different listeners have different levels of discrimination, the results of blind testing are suspect. But the point of testing is not to test the tester, but to test the object! The first step in designing a proper blind test is to assemble a panel of testers who have the same discriminatory power, and then to submit the object to this prequalified panel. Only this way can the analysis of variance distinguish differences in the quality of the objects from the quality of the reviewing panel.

The bottom line is that if no differences can be heard between two objects in a properly conducted blind test, then their performance quality (or lack thereof) is the same. It doesn't matter whether one object is produced by a "darling" of the high-end community and the other by some anonymous corporate assembly line; it doesn't matter whether one object uses some exotic technology and the other uses off-the-shelf componentry; it doesn't matter whether one object retails for $5000 and the other for $50,000. Most important, it doesn't matter whether one object advertises in the audio press reporting the test and the other does not.—Ron Levine, Philadelphia, PA, levineresearch@aol.com

Mr. Levine and Dr. Markley, we must simply let go of the belief that blind audio tests reveal any objective information about audible differences between components. This is because such tests are fundamentally subject to the listener's abilities. However, blind tests can be used for an audiophile Olympics. Mr. Levine notes, "The first step in designing a proper blind test is to assemble a panel of testers who have the same discriminatory power, and then to submit the object to this prequalified panel." And I'm suggesting that blind audio tests are a great way to create this panel of superlisteners. We can pit the Indonesian panel against the German panel. In fact, we can identify the single most highly trained and sensitive listener on the planet.

But here's the thing: If that listener or panel then failed a series of blind audio tests involving, say, two brands of speaker cables, would we therefore conclude that there are no audible differences? And what happens if, five years later, a new champion or panel takes the same tests and passes? Do we now agree that there are audible differences?

Sorry, this is not a sufficiently objective test of equipment, and brings me back to my original point: The only thing a blind audio test can help you conclusively determine is the ability of the listener(s). That's it. I agree with Dr. Markley that blind audio tests must first be validated, but obviously I don't think they ever will be. These tests are not useful enough to satisfy my objective streak, and the audio equipment in the room simply becomes the apparatus being used to test the humans.

I do not condemn blind testing in other fields, such as medical science. Let's start by pointing out again that all of us can learn to improve our scores on blind audio tests. If we could do the same thing with medical blind trials, they would be considered worthless. But blind audio tests engage different attributes of a human being than does the typical medical drug trial. Listening is both an innate ability and a skill that, as in an Olympic sport, can be enhanced with proper training. Practice improves your ability to score well in blind audio tests; as far as I'm aware, this is not true with blind drug studies. You cannot train some humans to react differently and therefore skew the drug studies based on who was used in the panel. Would Mr. Levine propose a superpanel of drug takers for testing medicine, as is suggested for audio components?

Let's face it: Whatever differences do or do not exist between two components are fixed and, in some cases, can even be measured objectively beforehand. But a blind listening test reveals only who among us can detect differences to various degrees of subtlety. Fun to play around with, yes, but useless for my objectivist needs.—Jon Iverson

Pandering

Editor: Jon Iverson's supposedly heartfelt diatribe against blind product comparisons (August 2005) smells more like dutiful pandering to Stereophile's advertisers. Bet they hate comparisons that might show up one product as better than its competitors. Stereophile loves the subjective response to audio gear—but here's Iverson arguing that blind comparisons are bad because they might expose some subjective evaluations as being worthless, even embarrassing.

Tell us, please: What percentage of its revenue does Stereophile get from readers, and what percentage from advertisers? Which is its most important constituency?—Bill Murphy, Sacramento, CA, billmurphy@pacbell.net

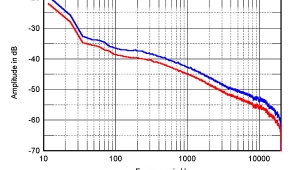

With all due respect, Mr. Murphy, you couldn't be more wrong. The reason I don't like blind audio tests is that they give everyone too much wiggle room, advertisers and critics alike. I much prefer the kind of tests John Atkinson did on the Harmonic Technology cables in the August issue, which were balanced against MF's subjective impressions. This approach brings us both sides of the story. By contrast, blind audio tests can easily be rigged to prove the points of manufacturer or critic. At the end of the day, however, they help us measure only the listening skills of the listener, not the performance of a product, whether good or bad. They bring us a highly suspect story. I'm sure we both want more.—Jon Iverson
