
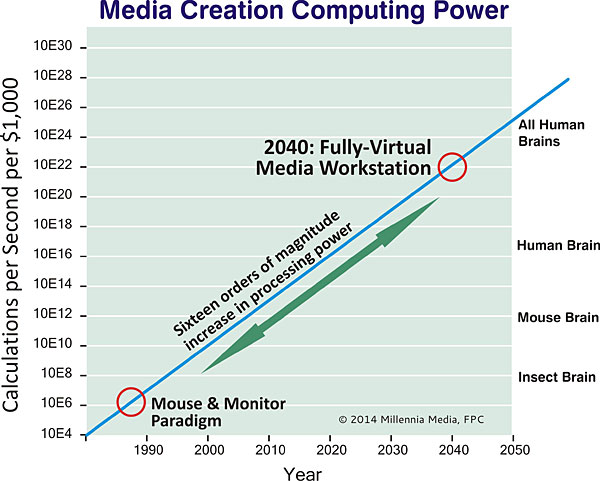

The "progress" is no surprise, but the thought of the constant stream of expenditures to replace what will no longer work, or no longer be "supported", or just to keep up with everyone else, really isn't all that attractive! There is such a rush nowadays to get everything to market before it obsoletes or loses sales appeal that nothing works properly. Everything is patched on top of patches and then all too soon has to be replaced entirely - long before "they" even get it right in the first place. I just hope those gimmicky free-air gestural controls understand emphatic one-fingered gestures, so that when the control interfaces work as poorly as the mechanical ones do now, we can let the designers know what we think of the half-baked breakware and horrendously poorly thought-out controls they keep sticking us with.

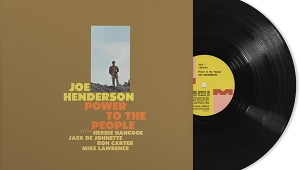

I'm just glad I stuck with the still-best audio technology around for stability - my vinyl gear - while a constant revolving-door stream of that wonderful new digital stuff keeps coming and going through my listening room as it obsoletes every few months. Technology has become so terrific that the freshest breath of air is, ironically, what does not have to be replaced so darn often! That's what has become a really novel pleasure anymore. Call me old if you like, but I yearn for the stability of those days when things didn't change so much. Dynamics are wonderful, but at near 120 dB, we will have as much as we can possibly need...to go deaf if that's sustained. Broke, too.