"What Happened to the Negative Frequencies?"

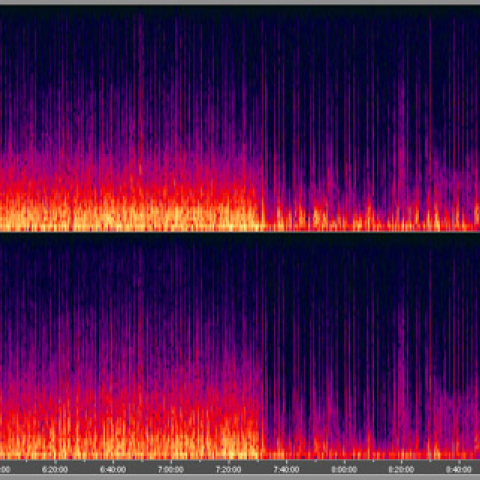

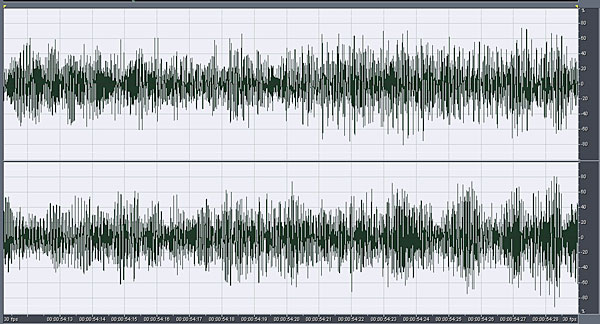

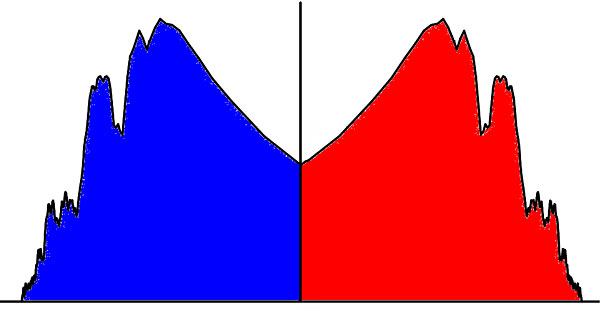

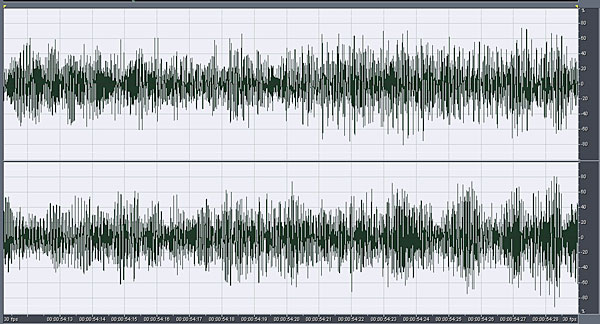

Nothing happened to them, of course, as I will show, but you mustn't forget that they are always there. Everyone in this room will be familiar with the acronym "FFT." The Fast Fourier Transform is both elegant and ubiquitous. It allows us to move with ease between time-based and frequency-based views of audio events. You will all be familiar with the following example. Here is the waveform of a short section of a piece of music:

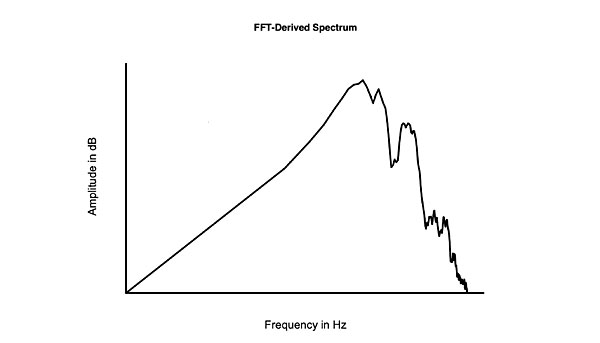

And here is the spectrum of that music:

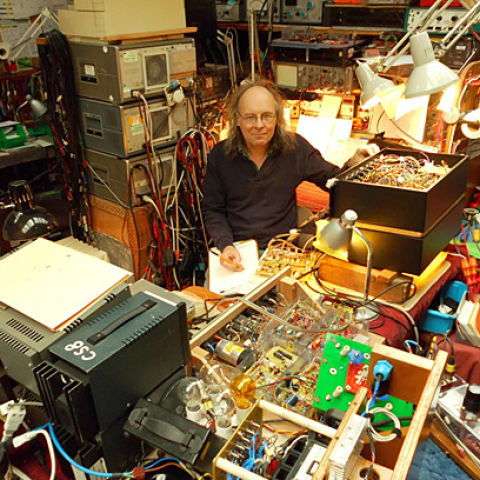

The usefulness of the FFT algorithm—or, more properly, the Discrete Fourier Transform—is everywhere you look in modern audio. If all we had to use were the tubed wave analyzers of my university lab, a life in audio would be very different and very difficult. But I don't like to use tools without understanding how they work—my physics lecturer at university used to yell that we must always try to examine matters from first principles—so in 1981, when I got my first PC, a 6502-based BBC Model B, I wrote a BASIC program to perform FFTs, based on an algorithm I found in a textbook. (The computer took around five minutes to perform a 512-point FFT—debugging the program took forever!) This was the process I followed in that program:

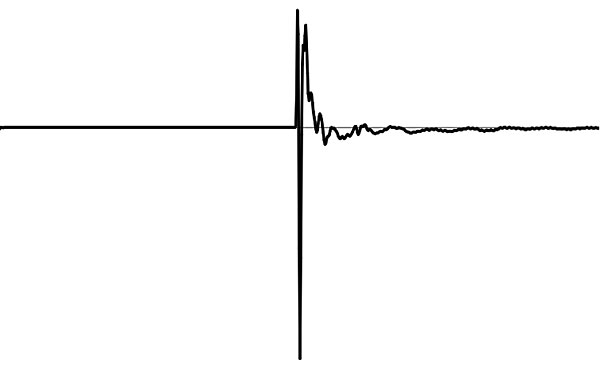

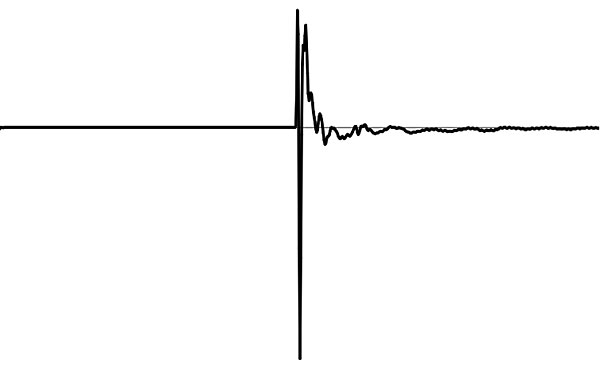

1) Capture the discrete-time impulse response of the system

My FFT satori was to realize that you needed to restrict, to window, the impulse response, then stitch its end to its beginning.

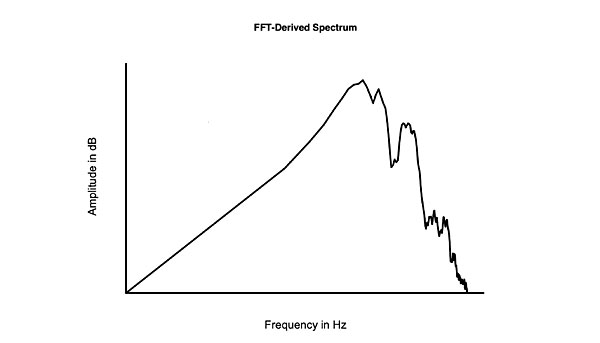

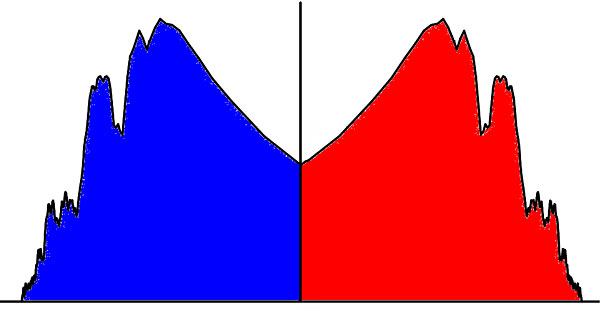

Now you have a continuous wave with a fundamental frequency equal to the reciprocal of the length of the windowed impulse, and the FFT algorithm gives the frequency-domain equivalent of that continuous waveform. However, this was the spectrum I obtained from that program:

You get two spectra: one with positive frequencies, corresponding to $e^{i\omega}$ (where $\omega$ is the angular frequency), the other with the same amplitudes at the same frequencies but with a negative sign, corresponding to $e^{-i\omega}$. You can visualize this as the spectrum being symmetrically "mirrored" on the other side of DC. The negative spectrum is discarded—or, more strictly, you extract the real part of what is a complex solution, and subsequently work with the modulus of the spectrum; ie, the sign is ignored.
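The mirror symmetry is easy to check numerically. What follows is a minimal sketch in Python, not the 1981 BASIC program: the naive `dft` helper, the 16-sample record, and the 3-cycle cosine are all assumptions chosen to keep the illustration short.

```python
import cmath
import math


def dft(samples):
    """Naive O(N^2) Discrete Fourier Transform; adequate for a small demo."""
    n = len(samples)
    return [
        sum(x * cmath.exp(-2j * cmath.pi * k * t / n) for t, x in enumerate(samples))
        for k in range(n)
    ]


N = 16
# Real-valued test signal: a cosine completing exactly 3 cycles in the record.
signal = [math.cos(2 * math.pi * 3 * t / N) for t in range(N)]
spectrum = dft(signal)

# Bin k holds frequency +k; bin N - k holds frequency -k.
for k in range(1, N // 2):
    positive, negative = spectrum[k], spectrum[N - k]
    # For real input, the negative-frequency bin is the complex conjugate
    # of the positive-frequency bin, so their magnitudes are identical.
    assert abs(negative - positive.conjugate()) < 1e-9

# The cosine's energy is split equally between bins +3 and -3 (index 13).
magnitudes = [round(abs(v), 6) for v in spectrum]
print(magnitudes[3], magnitudes[N - 3])  # 8.0 8.0
```

Because the two halves carry the same magnitudes, plotting only the positive half loses nothing for a real signal at baseband, which is why the negative half is usually dropped from view.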

But note the assumptions you have made: 1) the fabricated continuous wave extends to ±Infinity, which is untrue—even a Wagner opera has to eventually end—and 2) what happens at the point where the end of the impulse response is stitched to the beginning? What if there is a discontinuity at that point that mandates you having to apply some sort of mathematical "windowing" function to remove the discontinuity from the impulse response data? (Programs using FFTs should have a "Here Lie Monsters" pop-up when you apply the transform before you've checked that you're using the right window for your intended purpose.) And you have made a value judgment only to use the positive frequencies. While having done so will not matter if, for example, you are concerned only with the baseband behavior of a digital system, it will matter under different circumstances—as I will show when I get on to digital systems.
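The effect of a discontinuity at the stitch point can be sketched numerically. The example below is an illustration under assumed values, not a recommendation of any particular window: a 64-sample record, a sine with 10.5 cycles in that record (so the end does not meet the beginning), a Hann window, and a hypothetical `leakage_db` helper that reads the level in one bin far from the tone.

```python
import cmath
import math


def dft_magnitudes(samples):
    """Magnitudes of the positive-frequency half of a naive DFT."""
    n = len(samples)
    return [
        abs(sum(x * cmath.exp(-2j * cmath.pi * k * t / n) for t, x in enumerate(samples)))
        for k in range(n // 2)
    ]


def hann(n):
    """Periodic Hann window: zero at the start of the record, peak in the middle."""
    return [0.5 - 0.5 * math.cos(2 * math.pi * t / n) for t in range(n)]


N = 64
# 10.5 cycles in the record: stitching end to beginning leaves a discontinuity.
tone = [math.sin(2 * math.pi * 10.5 * t / N) for t in range(N)]
windowed = [w * x for w, x in zip(hann(N), tone)]

raw = dft_magnitudes(tone)
tapered = dft_magnitudes(windowed)


def leakage_db(mags, far_bin=25):
    """Level in a bin well away from the tone, relative to the spectral peak."""
    return 20 * math.log10(mags[far_bin] / max(mags))


print(f"no window: {leakage_db(raw):.1f} dB, Hann: {leakage_db(tapered):.1f} dB")
```

Without the window, energy from the single tone spreads into distant bins; with the Hann taper that spread is far lower, at the cost of a broader main peak. Which trade-off is acceptable depends on the intended purpose, which is the point of the "Here Lie Monsters" warning.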

Note also that the frequency resolution of the spectrum is directly related to the length of the time window you used. If that window is 5 milliseconds in length, the datapoints in the transformed spectrum are spaced at 200Hz intervals, which is not a problem in the treble but a real problem in the midrange and bass.
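The arithmetic behind that figure can be written out in a few lines. This is a sketch only; the function names and the band edges used for "treble" (2kHz to 20kHz) and "bass" (20Hz to 200Hz) are assumptions made for the illustration.

```python
import math


def bin_spacing_hz(window_ms):
    """Spacing between DFT datapoints: the reciprocal of the window length."""
    return 1000.0 / window_ms


def bins_in_band(window_ms, low_hz, high_hz):
    """Count the DFT datapoints that fall within [low_hz, high_hz]."""
    spacing = bin_spacing_hz(window_ms)
    first = math.ceil(low_hz / spacing)
    last = math.floor(high_hz / spacing)
    return max(0, last - first + 1)


spacing = bin_spacing_hz(5)            # 200.0 Hz for a 5 ms window
treble = bins_in_band(5, 2000, 20000)  # 91 datapoints across the top decade
bass = bins_in_band(5, 20, 200)        # 1 datapoint across the bottom decade
print(spacing, treble, bass)
```

With a 5ms window, the top decade is described by 91 datapoints while the bottom decade gets a single one, at 200Hz. Lengthening the window to 100ms brings the spacing down to 10Hz.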

The title of my lecture is therefore a metaphor: You cannot assume that the assumptions you make as an engineer will be appropriate under all circumstances. You almost need to know the result of a calculation before you perform it.

Science: A Digression

I referred earlier to using tubes in my engineering education; pocket calculators were not introduced until after I graduated from university, so my constant companion back then was my slide rule. My attitude to science was conditioned by my trusty slide rule. Slide rules are elegance personified: not only do you need to have an idea of the order of the answer before you perform the calculation, a slide rule prevents you from getting hung up on irrelevant decimal places in that answer. And you can't forget that, even when you obtain an answer, it is never absolute, but merely a useful approximation.

In physics, you learned to believe one impossible thing before breakfast every day. The strangeness starts when you learn that a coffee cup with a handle and the donut next to it are topologically identical. It continues when you learn that a stream of electrons fired one at a time at a pair of slits in a barrier creates the same interference pattern as if they had all arrived simultaneously. And by the time you get to string theory, it's all strange: if you read Leonard Susskind's books, you will find that string theory predicts that every fundamental particle in the universe is represented by a Planck-length tile on the surface of the universe, and that the surface area of the universe just happens to be exactly equal to the sum of the areas of those tiles. Nothing in audio is that strange!

However, when Susskind writes things like "quantum gravity should be exactly described by an appropriate superconformal Lorentz invariant quantum field theory associated with the AdS boundary," my eyes glaze over, and I reach for that donut and coffee I mentioned earlier. But when you study physics, deep down you grasp that "Science" never provides definitive answers, or even proof. Naomi Oreskes and Erik M. Conway wrote in their 2010 book, Merchants of Doubt: "History shows us clearly that science does not provide certainty. It does not provide proof. It only provides the consensus of experts, based on the organized accumulation and scrutiny of evidence."

And that evidence is open-ended. Even with that scrutiny, there are always the outliers, the things that don't fit, that are brushed aside. I am reminded of the old story, which I believe I first heard from Dick Heyser, of the drunk looking for his keys under a street lamp. A passerby joins in the search, and after a fruitless few minutes, asks where the drunk has dropped them. "Over in the bushes," answers the drunk, "but it's too dark to look there."

The philosopher Karl Popper said, "Science may be described as the art of systematic oversimplification." This is as true in audio as it is in science: As Richard Heyser explained in 1986, when it comes to correlating what is heard with what is measured, "there are a lot of loose ends!" It is dangerous to be dismissive, therefore, of observations that offend what we would regard as common sense. In Heyser's words, "I no longer regard as fruitcakes people who say they can hear something and I can't measure it—there may be something there!"

Which brings me to the subject of testing and listening
