Case Study 3: Digital Recording & Playback

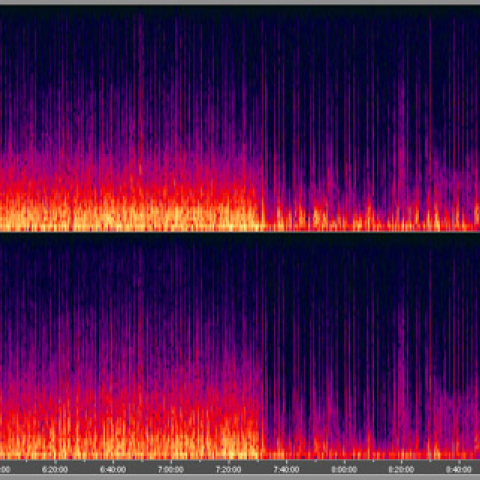

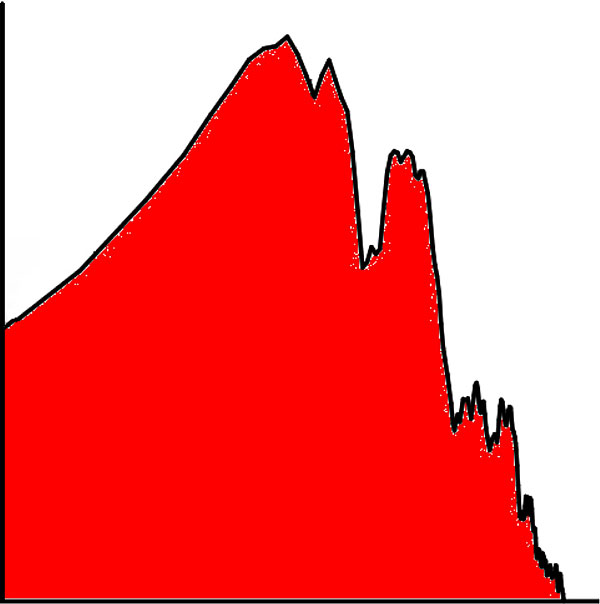

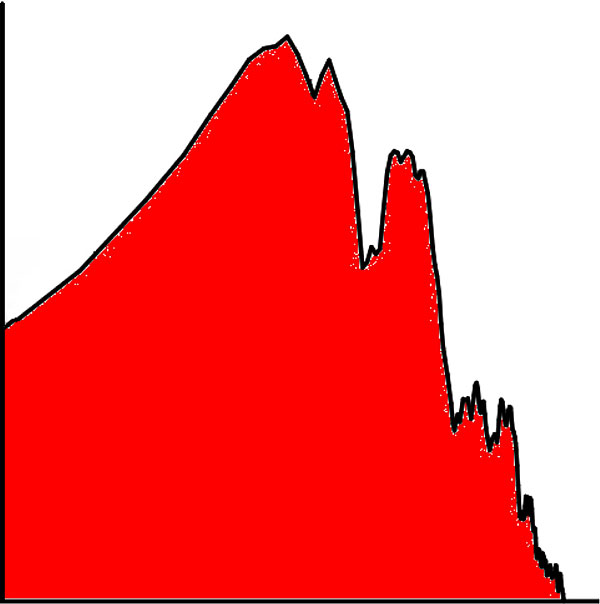

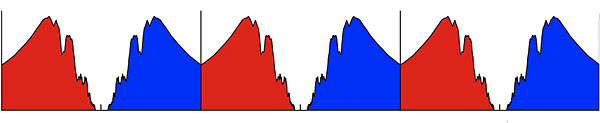

The title of this lecture asks "Where did the negative frequencies go?" Once we enter the world of digital audio, they are very much present. Here is the spectrum of the music waveform I showed earlier:

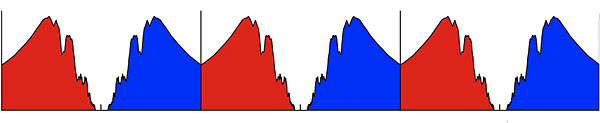

And this is the spectrum of the same signal after it has been sampled in the time domain:

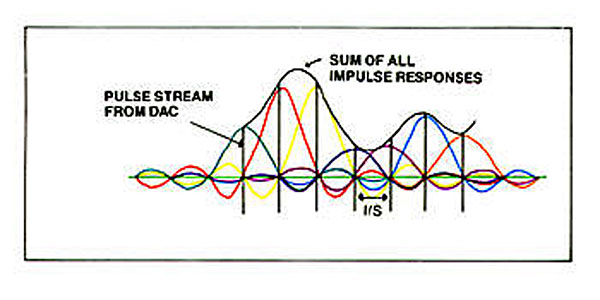

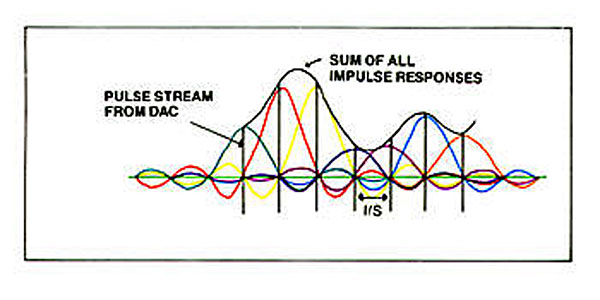
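As a sketch of why these images appear (the notation here is mine, not the lecture's): if the analog signal $x(t)$ has spectrum $X(f)$ and is ideally sampled at rate $f_s$, the standard result is that the sampled signal's spectrum is a sum of shifted copies of $X(f)$:

$$
X_s(f) = f_s \sum_{k=-\infty}^{\infty} X(f - k f_s)
$$

Because $x(t)$ is real, $X(-f) = X^*(f)$, so each copy centered on $k f_s$ carries the negative-frequency half just below $k f_s$ and the positive-frequency half just above it.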


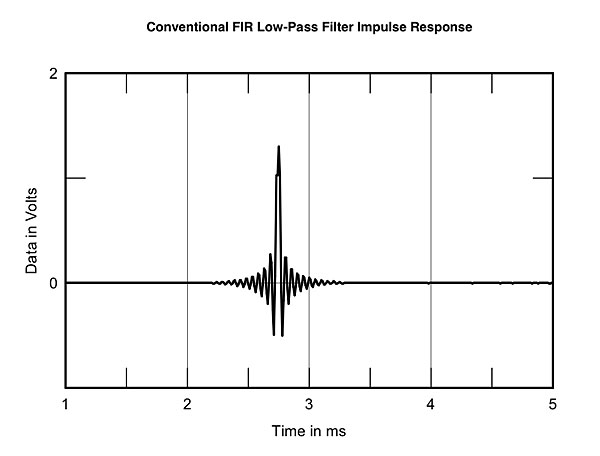

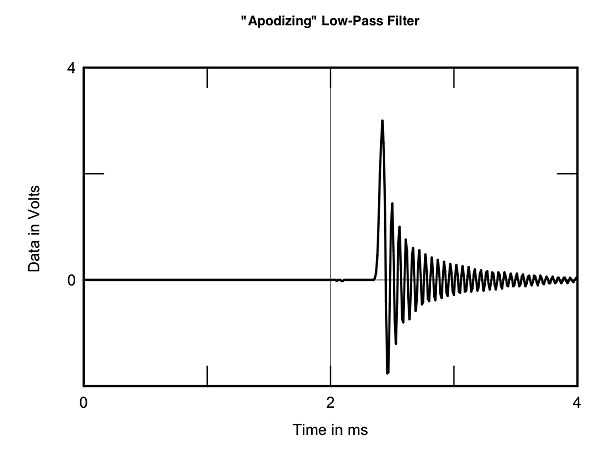

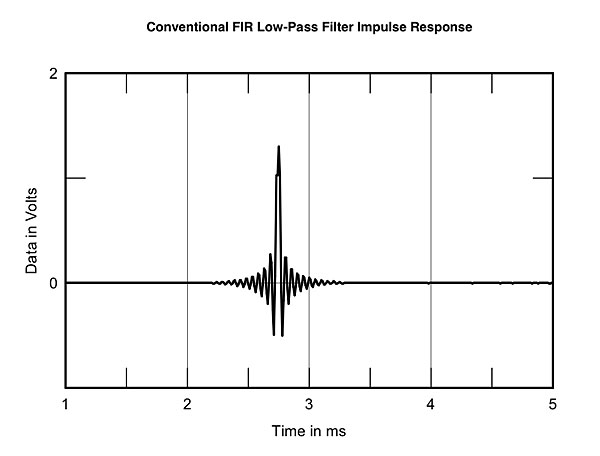

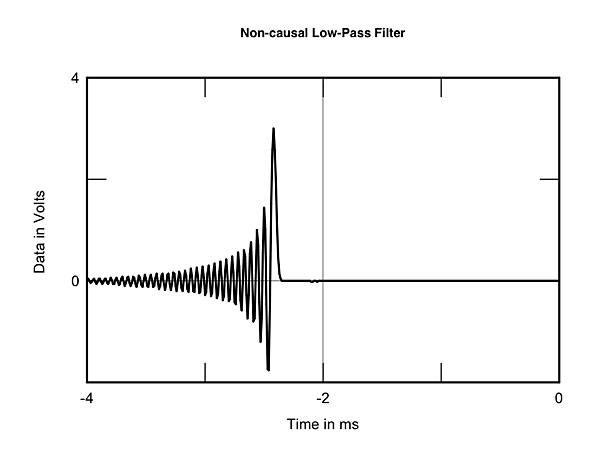

An idea I did find elegant was Peter Craven's introduction of so-called "apodizing" reconstruction filters. Compare the conventional filter's impulse response above with the impulse response of a Craven apodizing filter:



The positive (red) and negative (blue) spectra are mirrored around the sampling frequency and all of its harmonics, the latter extending to, if not infinity, then to something practically close to it. If you wish to play back time-sampled data, you need some way of eliminating all those spectral images other than the one in the baseband. Yes, a low-pass filter is required, but that filter turns out to have a very special function: it doesn't just remove the ultrasonic images, it reconstructs the original analog signal (below the Nyquist frequency, that is, half the sample rate). The pulses representing the sampled amplitudes are convolved with the impulse response of the filter to give the original signal, something that I found elegant in the extreme when I first understood it. That convolution is shown here in a diagram taken from John Watkinson's 1986 book on digital audio:


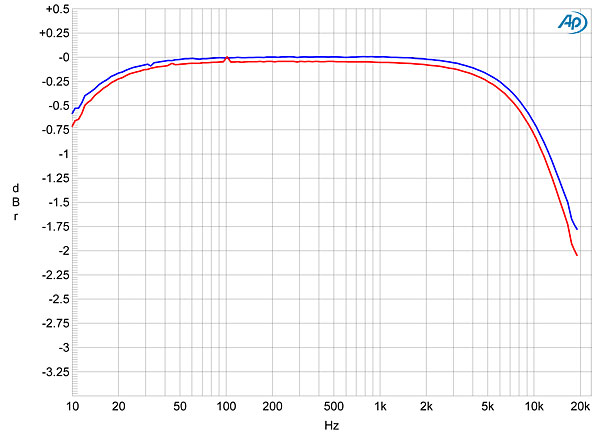

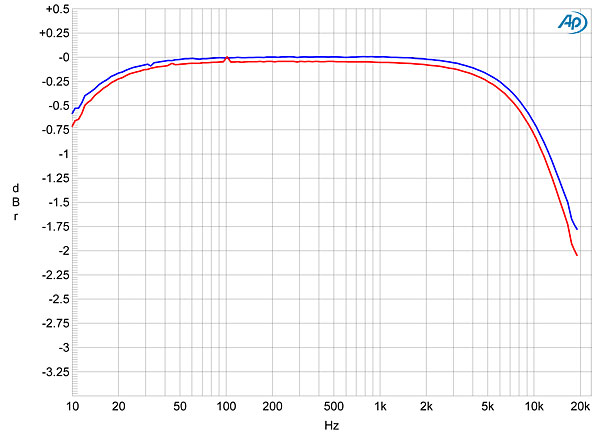
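The convolution described above can be sketched numerically. This is a minimal illustration that assumes an ideal brick-wall filter, whose impulse response is a sinc pulse (Whittaker-Shannon interpolation); real reconstruction filters only approximate it. The 1kHz test tone, the record length, and the function names are illustrative choices, not anything taken from the lecture.

```python
import math

def sinc(x):
    """Normalized sinc: sin(pi*x) / (pi*x), with sinc(0) = 1."""
    return 1.0 if x == 0 else math.sin(math.pi * x) / (math.pi * x)

def reconstruct(samples, fs, t):
    """Value at time t from a sum of sample-weighted sinc pulses."""
    return sum(s * sinc(fs * t - n) for n, s in enumerate(samples))

fs = 44100.0   # sample rate in Hz
f0 = 1000.0    # test tone, well below the Nyquist frequency (assumed)
samples = [math.sin(2 * math.pi * f0 * n / fs) for n in range(2000)]

# Evaluate halfway between two samples, far from the ends of the record,
# so that truncating the (infinitely long) sinc tails matters little.
t_mid = 1000.5 / fs
estimate = reconstruct(samples, fs, t_mid)
exact = math.sin(2 * math.pi * f0 * t_mid)
error = abs(estimate - exact)
print(f"reconstructed {estimate:.6f}, exact {exact:.6f}, error {error:.2e}")
```

The residual error comes only from cutting the sum off at a finite number of samples; between the sample instants the filter's impulse response fills in the original waveform.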

I still marvel at the elegance of this concept. But what if you don't use a reconstruction filter? The effect in the audioband is inconsequential—just a small rolloff in the top octave, due to the aperture effect (the pulses have a finite length).


NOS DAC with no reconstruction filter, frequency response at 44.1kHz sample rate

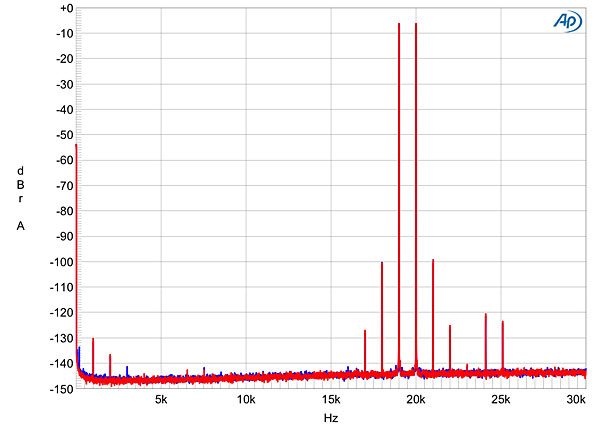

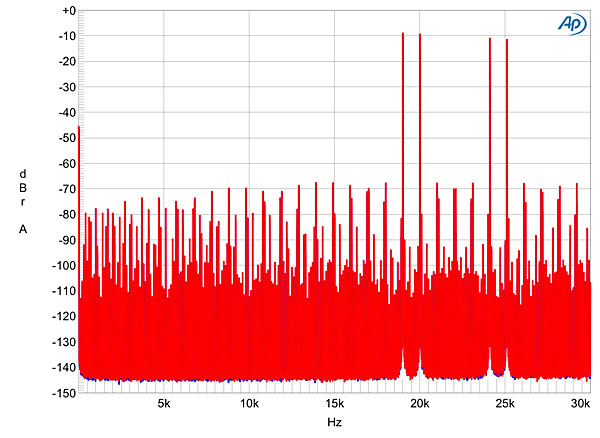
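The size of the aperture-effect rolloff can be estimated with a short calculation. This sketch assumes each sample is held for one full sample period (a zero-order hold), which gives a sin(x)/x magnitude response; an actual NOS DAC may hold its pulses for a different length of time, and the function name is hypothetical.

```python
import math

def zoh_response_db(f, fs):
    """Magnitude in dB of a full-period zero-order hold at frequency f."""
    x = f / fs
    mag = 1.0 if x == 0 else abs(math.sin(math.pi * x) / (math.pi * x))
    return 20 * math.log10(mag)

fs = 44100.0
for f in (1000.0, 10000.0, 20000.0):
    print(f"{f / 1000:.0f} kHz: {zoh_response_db(f, fs):.2f} dB")

droop_20k = zoh_response_db(20000.0, fs)
```

Under that assumption the droop at 20kHz comes out a little over 3dB, with well under 1dB of loss at 10kHz, which is consistent with a small rolloff confined to the top octave.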

Above the audioband, the conventional reconstruction filter gives a well-behaved analog signal. Reproducing data representing an equal mix of 19 and 20kHz tones, you get a spectrum in which the inverted images of those tones—the negative frequencies—are well suppressed.

DAC with conventional reconstruction filter, spectrum of 19+20kHz tones with a peak level of 0dBFS

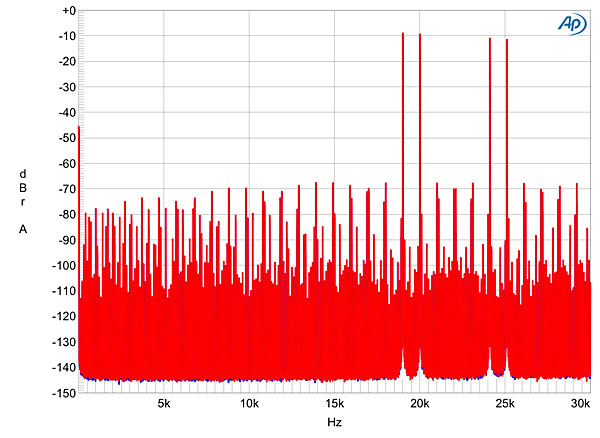

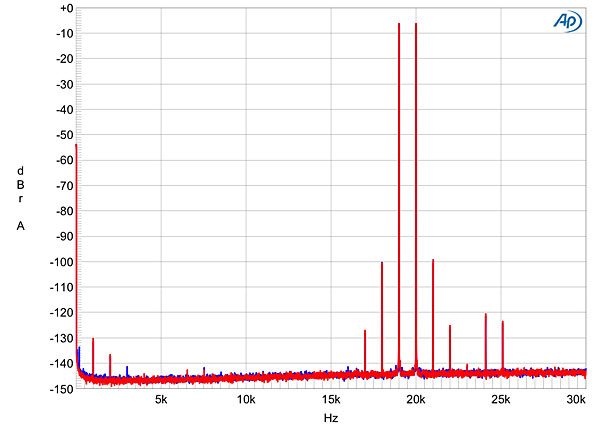

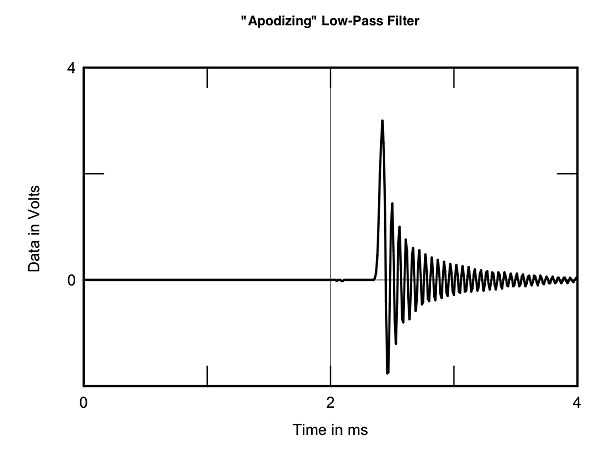

But, my goodness, when we repeat this measurement with a so-called NOS DAC (for Non-OverSampling), which has dispensed with the reconstruction filter, we get this:

NOS DAC with no reconstruction filter, spectrum of 19+20kHz tones with a peak level of 0dBFS

Ugh! There are the negative frequencies in all their glory, as well as a host of related aliasing and intermodulation products dumped back into the audioband.
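Where the images of the two test tones fall can be worked out directly from the sample rate. The helper below is a hypothetical illustration rather than part of any measurement software: for a tone at f and a sample rate fs, images appear at k*fs - f (the mirrored, negative-frequency image) and k*fs + f for every positive integer k.

```python
def image_frequencies(tone_hz, fs_hz, max_hz):
    """Image frequencies of a sampled tone, up to max_hz, in ascending order."""
    images = []
    k = 1
    while k * fs_hz - tone_hz <= max_hz:
        images.append(k * fs_hz - tone_hz)      # mirrored image below k*fs
        if k * fs_hz + tone_hz <= max_hz:
            images.append(k * fs_hz + tone_hz)  # image above k*fs
        k += 1
    return images

fs = 44100
images_19k = image_frequencies(19000, fs, 100000)
images_20k = image_frequencies(20000, fs, 100000)
print(images_19k)  # [25100, 63100, 69200]
print(images_20k)  # [24100, 64100, 68200]
```

The first pair of images, at 24.1kHz and 25.1kHz, sits only a few kilohertz above the audioband, which is why a filterless DAC passes them on to the amplifier almost unattenuated.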

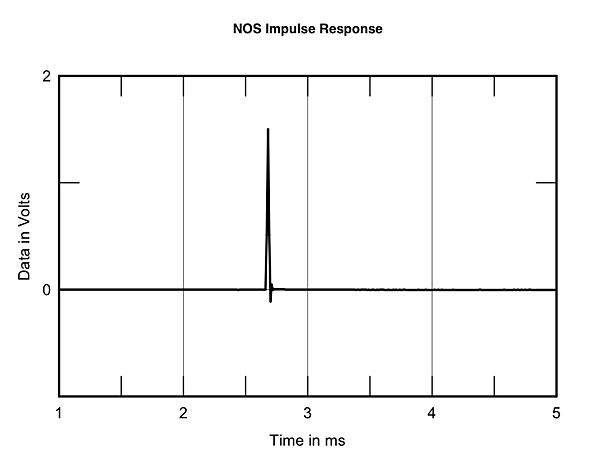

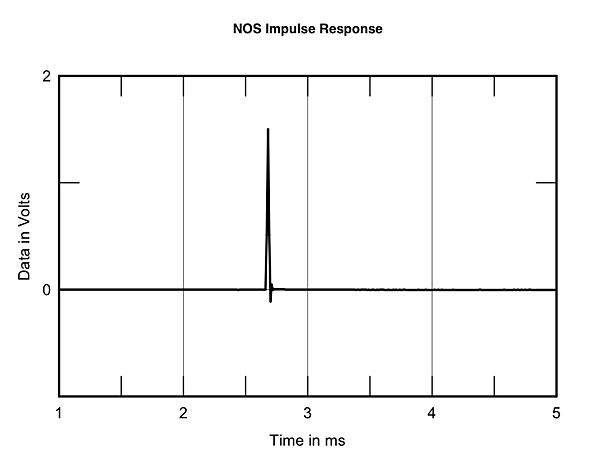

So why do listeners like this mess? It can't be the aperture effect: –3dB at 20kHz is a subtle change at best. Some propose that it is the improved time-domain behavior of the system that the listeners are responding to . . .

NOS DAC with no reconstruction filter, impulse response

. . . compared with the impulse response of a conventional time-symmetrical FIR reconstruction filter:

DAC with conventional reconstruction filter, impulse response
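To make "time-symmetrical" concrete, here is a minimal sketch of a generic linear-phase low-pass FIR filter built as a Hann-windowed sinc. The tap count and cutoff are arbitrary assumptions, and this is not the filter used in any particular DAC; the point is only that the impulse response is a mirror image about its peak, so the ringing before the peak equals the ringing after it.

```python
import math

def windowed_sinc_lowpass(num_taps, cutoff):
    """Linear-phase FIR low-pass; cutoff is a fraction of the sample rate."""
    mid = (num_taps - 1) / 2
    taps = []
    for n in range(num_taps):
        x = n - mid
        if x == 0:
            ideal = 2 * cutoff
        else:
            ideal = math.sin(2 * math.pi * cutoff * x) / (math.pi * x)
        window = 0.5 - 0.5 * math.cos(2 * math.pi * n / (num_taps - 1))  # Hann
        taps.append(ideal * window)
    total = sum(taps)
    return [t / total for t in taps]  # normalize to unity gain at DC

taps = windowed_sinc_lowpass(101, 0.25)
peak_index = max(range(len(taps)), key=lambda n: abs(taps[n]))
pre_energy = sum(t * t for t in taps[:peak_index])       # ringing before the peak
post_energy = sum(t * t for t in taps[peak_index + 1:])  # ringing after the peak
print(peak_index, pre_energy, post_energy)
```

Half of the ringing energy arrives before the main peak, which is the acausal behavior that minimum-phase designs move to after the peak.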

Yet the differences between these two impulses all fall within the ear/brain's integration period. So unless people like the sound of their amplifiers misbehaving with the ultrasonic image energy, I have no idea what is going on here, other than to say that, whatever it is, it is not elegant.

DAC with minimum-phase, apodizing reconstruction filter, impulse response

The acausal ringing of the conventional filter of both the A/D and D/A converters has been replaced by a larger degree of causal ringing—it occurs after the event instead of before and after—at a slightly lower frequency. (The apodizing filter has a null at the original data's Nyquist Frequency.)

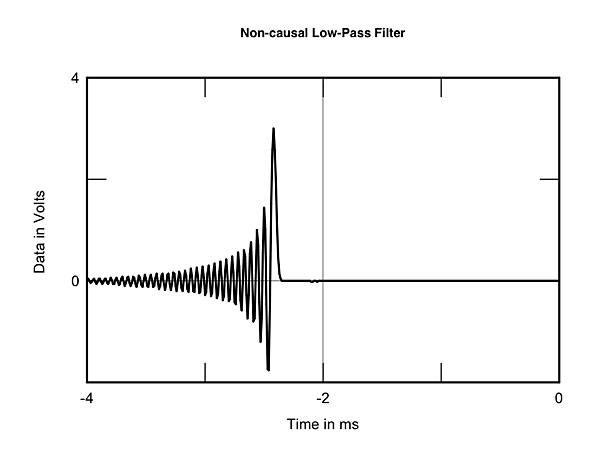

Again, people report that they prefer the sound of apodizing filters. A few years ago I published an article by Keith Howard in which he investigated the behavior of the reconstruction filter. As part of the preparation for that article, Keith sent me DVD-As of music treated with different filters. The recordings weren't identified, but Keith asked some of the magazine's writers to listen to the examples and rank them on sound quality. This was extraordinarily hard to do, but one difference did emerge as being consistently audible under blind conditions. When we were sent the key as to what filters had been used for each example, music reconstructed with the minimum-phase filter above sounded superior to music reconstructed with this filter:

DAC with acausal reconstruction filter, impulse response

Okay, the latter is nothing like what we hear in nature. However, why does replacing acausal ringing at a frequency that people can't hear with causal ringing at a slightly lower frequency that people still can't hear result in better sound (er, sound that people tend to like more)? Again, as Dick Heyser said, "there are a lot of loose ends!" (footnote 7)

Footnote 7: In subsequent conversations, I have been told that the ear/brain also acts as a wavefront arrival detector, that an acausal filter causes mental confusion as both the initial onset of the ringing and the arrival of the maximum energy peak are incorrectly interpreted as two separate events rather than one.